The finest backhanded compliment I receive, and the one I receive most often, is: "Good piece; I actually understood this one." A title like the one above is, on that evidence, an act of commercial self-harm. It guarantees that many readers will not open the essay, and that some of the brave ones will not survive this paragraph. But stay with me. This one is genuinely parseable. It is about where my investment thesis could be, not merely early, exaggerated, or temporarily embarrassed, but dead wrong. It is also, mercifully, not one of my heavier expeditions into the epistemic and ontic fabric of GenAI. The truer confession is that I became so taken with the phonetic symmetry of two words that beg to be paired, even if the dictionary has not yet granted them a joint tenancy, that leaving them holstered felt like a literary felony.

Any piece this drenched in self-referential meta-commentary owes the reader a quick defense of its tone. In Why We Write, I argued that long-form writing imposes a discipline on my investment stance that private thought never can. What I never quite justified was my militant refusal to provide a bullet-point summary. I could pretend it is because any reader can now command an LLM of choice to manufacture a synopsis calibrated precisely to their preferred level of laziness. The honest reason is that my streams of consciousness refuse to be corralled into a listicle. The essays are less about arriving at a tidy destination and more about the digressions and diversions by which the argument gets there. Parading this during a self-audit borders on the pretentious, perhaps the boastful. But, as some readers have noticed, I treat prose much as I treat my generated images. As a laboratory. I recently took perverse pleasure in adopting the joyless, beige syntax of a traditional macro-analyst just to argue that Seoul and Taipei could rapidly become to AI what the Valley is to the internet, Detroit is to autos, and Bengaluru is to services.

Which brings me to another incurable habit. Shamelessly shoehorning references to my own back catalog so those essays do not suffer the silent, undignified death that usually arrives a few hours after the email is sent. Once again, I digress, delaying the dive into the uncomfortable.

Of the New Words

GenAI is not merely changing what machines can do. It is mugging the language. An "agent" can now enter a sentence without summoning either James Bond or a real-estate broker. "Tokens" no longer require an apology for crypto. "Context" has become something one stuffs into a machine rather than a thing one provides to a spouse before losing an argument. Old words are being reassigned, new ones minted, and ugly ones acquiring market caps. Tokenmaxxing, vibe-coding, slop, p(doom): the vocabulary of this cycle already sounds like it was assembled by a sleep-deprived engineer, a meme account, and a Tudor pamphleteer. Some are newly minted, some are newly mainstreamed. So I am going to press two more into service. Pejoration and perjuration may not be the cleanest pair in the language, but they capture something I keep seeing and something I must now examine in myself.

I do not speak Punjabi, but I once heard a phrase, roughly remembered as "twada kutta Tommy, sadaa kutta kutta," and loved it immediately. The meaning is simple: your dog is named Tommy; my dog is to be treated like a dog. Six words, one civilization's worth of hypocrisy. It is the asymmetry of regard in its purest form. Affection for one's own evidence, contempt for the other fellow's identical evidence, and a moral vocabulary conveniently arranged around ownership.

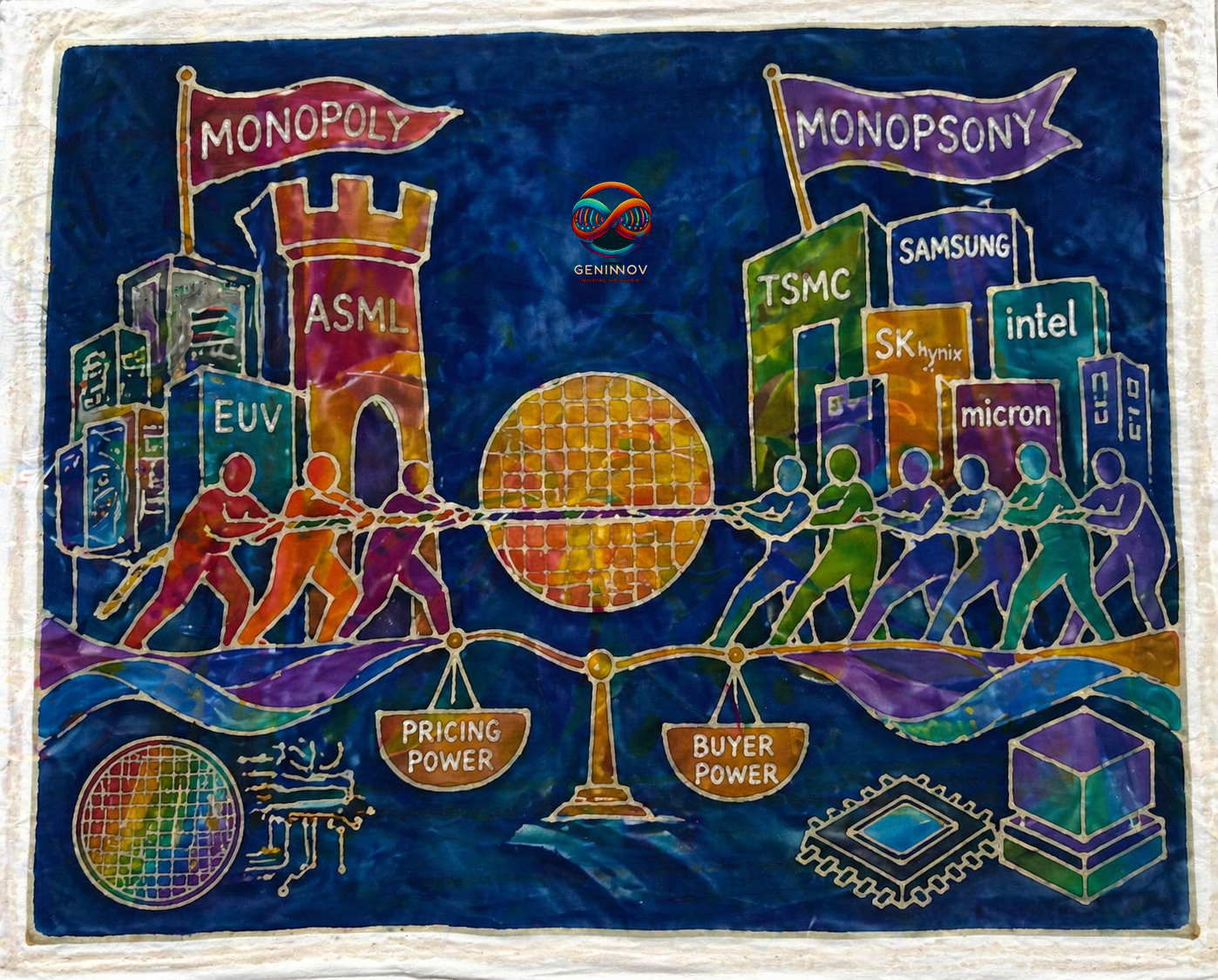

That is what I mean here by pejoration. AI's winners are Asian companies turning demand into revenue, revenue into margins, and margins into numbers large enough to embarrass many software dreams. Yet because these profits appear in the supposedly less glamorous layers of the stack, they are dismissed as "picks and shovels" or "AI infrastructure," as if the money becomes less real when it is earned by the people selling the only thing everyone else needs. One company's margin is hailed as platform economics; the other's is binned as a cyclical commodity windfall.

Perjuration is the companion offense. Not merely being wrong, but repeatedly editing the record so that one's wrongness never has to appear in court. The standard hype-screamer arrives with a list of things today's AI "cannot do," garnished with the inevitable totems of the South Sea Bubble and the dot-com peak. The diligent ones quietly perjure themselves, trimming that list every few weeks as models leap forward and share prices climb. The worst offenders go further. They shift the chart's starting point, recalibrate the axes, and engage in full-throated perjury to force the historical pattern to declare that the stock market must peak this quarter. They are not forecasting the future. They are rewriting the present for the crime of refusing to resemble their favorite historical overlay.

It is delightful to examine other people's dogs. Today, unfortunately, mine is on the table. I am lucky enough to have no audience I must flatter, no sales team requiring a morning anthem, no investment committee demanding that the previous memo be retroactively sanctified. I need only one thing. Positions that make money. That makes the exercise both simpler and more brutal. Where am I calling someone else's Tommy a dog? Where am I moving my own axes, changing my own definitions, and protecting my conclusions with fresh stationery?

This, then, is not the usual "what could go wrong" pageant, in which valuation, geopolitics, momentum, macro, and the other familiar guests arrive in their assigned costumes. It is an attempt to ask what those of us on the right side of the AI trade may now be too well-paid, too vindicated, or too drunk on the price screen to notice. The danger is not that the bears have been wrong. The danger is that being right for long enough can become its own method of becoming wrong.

Risk 1. AI Overperforming

My first pejoration is the ease with which I have pooh-poohed regulatory risk, leaning on what I have long considered the cleanest and most brutal law of competitive dynamics: if I don’t do it, the person I hate the most will.

That sentence has done a lot of work for me, perhaps too much. Until recently, it was safe to treat political speeches, regulatory drafts, and safety summits as theatre. Broad in language, vague in enforcement, and mostly harmless to the actual direction of travel. For years, I have argued that the well-flagged AI risks dominating conferences and podcasts, from job displacement, social harm, and misinformation to privacy and sovereignty concerns, are real but are precisely the shocks the system is built to absorb. They are priced in. They are written about. They are white-papered to death. They produce hearings, lawsuits, compliance departments, insurance products, and very earnest slides. They do not stop the machine. They become part of the machine's budget. The reason none of them slows the technology is the cleanest piece of game theory ever devised, which bears repeating: if I do not do it, the person I hate the most will.

Inside that phrase sits a more important hidden message. The front end of the field, model-making, is one where progress relies almost entirely on scale, not on some rare, hoarded skill. At every higher level of scale, frontier models unlock capabilities nobody forecasted, and this scale-based improvement is available to anyone with the capital to persist. The hardware caveat matters but does not change the conclusion. Training the next frontier model is not the same as serving it to hundreds of millions of users every day, so a player with less hardware can still push the scale frontier, only more slowly and more expensively.

What changes this calculus is not a white paper. It is a sudden, highly visible catastrophe. An AI-facilitated cyber event massive enough to cripple core infrastructure in a major economy. Not a stolen database, or a hacked university system like this week's. A power grid. A payment rail. A nationwide hospital network going dark simultaneously. A water system. A cloud provider on which half the economy unknowingly rests. The morning after that, the regulatory conversation pivots from think-tank platitudes to emergency powers. AI development transforms overnight from a technology-led race into a permission-led crawl. Licences. Compute registries. Mandatory audits. Frontier-model freezes. Hardware export controls beyond the current regime. Criminal liability. Restrictions on open weights. Some ugly form of nationalization, or its softer modern equivalent of private ownership and public instruction. The exponential curve I am betting on does not bend downward because the technology hits a wall. It snaps because the rules change overnight.

Right now, the probability of this feels extremely low. Such scenarios belong to dystopian screenwriters and sensationalists. But low probability is not unchanged probability, and it would be a severe perjuration on my part to quietly keep shifting my arguments for why regulatory risk remains low while the alarm bells grow louder. I cannot say regulatory risk is irrelevant because regulators are slow, then because enforcement is weak, then because companies will route around it, then because bad actors will not comply, then because the market has already priced it, and still pretend I am making the same disciplined point. At some stage, that is not a thesis. It is a dog renamed Tommy every quarter.

Risk 2. Memory Mania

My second pejoration is the comfortable claim that nothing anyone can do, even with the brightest teams or any amount of capital in the world, can meaningfully reduce the global dependence on the fastest DRAM and NAND.

I am still peeved that Silicon Shock has not taken off as a term. Maybe I should console myself that the shock, if not the phrase, is quietly turning relatively overlooked companies into the world's most profitable, and settle for the lesser prize of some of the underlying stocks becoming ten-baggers or better in barely a year. One must slap oneself with a stick and learn to suffer these indignities.

The immutability of supply in the face of demand keeps producing upside surprises, with no end in sight. Even so, I never expected DRAM and NAND prices to rise as far as they have in the time they have. Contract DRAM is up multiple-fold from where the cycle began, and the screen is now the daily reminder that the supply curve we drew was less a forecast than a floor. Every memory bull, including this one, is currently being paid by a price line that nobody in the room would have written down two years ago without first checking whether the room contained adults.

I felt little need to study SRAM in detail. The wafer math did the work. The capacity required to displace even a sliver of installed DRAM with on-die or near-die SRAM exceeded the fab capacity of the entire planet. So I could safely wave away the panic around Turboquant, Google's TPU memory hierarchies, Musk's Terafab ambitions, and whatever the incumbent memory makers' raised capex plans turn out actually to deliver. Each one looked, on the back of its envelope, too small or too slow to matter. The danger is that I have converted a very good constraint into an eternal one.

The demand side is where the perjuration danger sits more squarely. A bull case built at $2 is a different creature at $15, and I would be perjuring myself if I kept repeating the same bullish arguments at memory prices several multiples above where I first made them. I have never liked the time-invariant proclamations of those calling for a 2000-style collapse year after year, and I owe my own readers the courtesy of not doing the same here. At some point, a memory price surge stops being a tax only on legacy gadget demand or a rationing mechanism for buyers who cannot pay, and becomes a behavioral accelerant. Hyperscalers fund efficiency teams that would otherwise have remained PowerPoint appendices. Model architects revisit KV-cache, quantization, and sparsity with the urgency that only a price line provides. Hardware roadmaps that would have died in committee on cost grounds suddenly find a sponsor. Most of these efforts will fail. One or two will not.

For now, I would like to believe that the breakeven for those efforts remains comfortably above where we are. The bull case has room. But the breakeven moves every quarter, and the longer the surge runs, the more capital and talent it pulls into the search for an alternative. Even the memory makers themselves, drowning in the hundreds of billions they are making, must be eyeing the unwanted attention that comes with being the accidental bottleneck of the global economy, and asking how long they can profitably do nothing to unblock the pipe. I do not yet see the architecture that displaces a meaningful slice of DRAM. I would be perjuring myself if I claimed I had looked hard enough to be sure none is coming.

Risk 3. Whither Robotics

My third pejoration is the techno-optimist's most seductive article of faith. The physical bit will sort itself out.

Like most people who watched foundation models graduate from party trick to colleague, I assumed the cognitive bottleneck was the one holding robotics back. That premise has aged well. Vision-language-action models work in ways they did not eighteen months ago, and the consensus in my circles, mine included, is that the rest is plumbing. Actuators, batteries, sensors, supply chains. Hard, but the kind of hard that engineers solve. Once solved, the leap from a thousand units to a million is the kind of industrial vandalism the Shenzhen ecosystem commits before lunch. Hence the comfortable position I have held for two years. Robotics is coming, and I will pick it up along the way as the semiconductor theme begins to lose its shine.

The trouble with a comfortable position is that remaining comfortable for two years in this market usually means you are the mark. Embodied AI, as a phrase, is older than several models that have already died of middle age. And in those two years, beyond a respectable growth rate on a microscopic base in narrow product categories, there has been no meaningful change in the physical domain anywhere on the planet. Yes, the internet is bursting with heavily edited clips of borgs jogging alongside their engineers, folding laundry, doing backflips, and generally auditioning for venture decks. But warehouses still look like warehouses. Construction sites still look like construction sites. Hospitals, factories, kitchens, and care homes have not acquired a robot layer visible to anyone without a camera crew. The humanoid count in any major economy is a rounding error so small it only looks impressive when measured against a slightly smaller rounding error somewhere else. The cognitive ceiling has lifted. The physical floor has not moved.

This is exactly the sort of long-run story I have disliked elsewhere, whether the topic is space data centres or practical quantum computing. The destination is declared inevitable, every microscopic milestone is inflated into macro-progress, and every disappointing macro datapoint is excused with another genuflection to the long run. I do believe robotics will become one of the largest industries the world has ever built. I also believe that holding that conviction quarter after quarter, while the physical world stays stubbornly unrobotic, is precisely how investors end up right on the destination and wrong on every name along the way.

It would be a perjuration to keep repeating "cognitive was the hard part" in year three, and year four, and year five, without once asking whether the physical part is, in fact, the hard part. The investable mistake may be staring at the shiny chassis when the real money lives somewhere quieter. Robotics' equivalent of HBM. The boring, scarce, enabling layer without which the glamorous layer cannot scale, and which everyone else is too distracted by humanoid demos to underwrite.

The same warning applies to adjacent miracles. The belief that LLMs will quickly deliver perfect autonomous vehicles and a visibly shrinking population of human drivers may be an even cleaner case of pejoration and perjuration than robotics. Faster drug discovery is another candidate. In each case, the cognitive leap is real. The investable mistake is assuming the physical, regulatory, biological, or deployment world will be courteous enough to keep pace.

Risk 4. Power is Not the Prize

My fourth pejoration is the time-invariant conviction that no power name will ever become the most profitable company in history the way a silicon name might, and that the entire electricity-shortage narrative owes more to its narratability than to its underlying severity, especially in the way it gets played in the equity market.

The evidence has been sitting in plain sight. No data center, anywhere, has gone dark. Hyperscalers have queued, complained, paid more, signed PPAs with reactors that have not yet been built, and parked gas turbines on trailers next to buildings like billion-dollar backup singers. They have not run out of power. Substations photograph beautifully. Wafer queues do not. A senator fulminating about a transformer backlog harvests clicks that an HBM3E shortage never will. Headlines warning of civilizational electricity shortfalls arrive at roughly the same pace as Q3 capex guides rise. Many power-sector picks-and-shovels are seeing fatter order books, but not with the pricing power or volume leverage of our favorites. Their valuations, meanwhile, have run ahead anyway.

Here is where I have to flinch. I have spent considerable ink accusing others of pejoration for waving away Asian AI earnings as "picks and shovels." Re-read the previous paragraph in that light. I have just dismissed an entire industrial segment as a narratable sideshow because no single name in it can wear the crown. That is the same move. A category I have been pejorative toward, on the grounds that nobody in it gets to be the biggest winner. The reasoning is not analytically wrong. It is structurally identical to the reasoning I have spent two years mocking in others.

The evidence that should force me to reprice is narrower than the panic suggests but sharper than my dismissiveness allows. A hyperscaler publicly canceling a planned data center build for power reasons. A state government rationing new interconnect approvals in favor of residential or industrial load, explicitly against compute. A reactor restart slipping from scheduled to deferred. Long-dated PPA pricing beginning to invert hyperscaler unit economics. None of these validates the panic. Each validates something more uncomfortable. That I have been right about the segment producing no trillion-dollar champion, and wrong about the constraint's other implications for the pace, geography, and cost of AI innovation. The first claim is about the prize. The second is about the cost. I have only ever audited the first.

The geopolitical layer is sharper still. A serious energy shock and authorities deciding which industries face the most severe supply cuts are no longer idle scenarios, even if they remain improbable. Data centers are not, and honestly do not deserve to be, the constituency that wins that allocation argument.

It would be a perjuration to keep waving away every geopolitical worsening on the grounds that no data center has gone dark yet, and that the AI innovation wave can scale independently of the physical world. Even beyond geopolitics, the assumption that engineering and capital always arrive on time has been wrong before in adjacent industries. If I am still repeating the same dismissive line three years from now while a meaningful slice of the planned hyperscale build sits in interconnect queues, I will not have been a realist. I will have been the person who confused the absence of dark data centers with the absence of a binding constraint, and who missed a perfectly good reprice in the power layer because the prize was not where I was looking.

Risk 5. Living in the Nominal World

My fifth pejoration is the deeply held conviction that we now live in a nominal world: volume growth has quietly become the headache of economists, policymakers, and anyone charged with overall welfare, but is no longer a central concern of equity markets. I hold this view strongly enough that, instead of the customary one- or two-paragraph setup followed in the previous four sections, I am going to take several. Then we will get to the underbelly.

If I were still wearing my macro-strategist hat, my notes would be as obsessed with the post-COVID behavioral shift among the world's price-setters as they currently are with the semiconductor names. I slip into this theme whenever I get the chance in these letters, and have devoted entire essays to it.

Set aside the printed inflation numbers. The deeper truth is that companies have quietly stopped competing on volume growth or market-share capture and have started working double-time on maximizing revenue through innovative pricing structures. They do not need to sell more toothpaste, software seats, memory bits, or compute. They need to sell the same thing in a cleverer wrapper, at a higher price, with a smaller allowance, a better tier, a surcharge, a bundle, a priority lane, or a mysteriously shrinking entitlement.

As I type this paragraph, ChatGPT is asking me, for the first time, whether I would like a faster response in exchange for spending a higher fraction of a shrinking token allocation, at the same monthly price I paid last year. Shrinkflation has finally come for the matrix.

As I argued when handing out my award for the most far-reaching business decision of last year, the memory makers operated in a cartel-like manner, without the inconvenience of an OPEC-style cartel, to quietly retire a generation of memory products. That single decision midwifed the conditions we are living through now.

The behavior is not confined to memory. TSMC, the closest thing the industrial world has to a true monopolist, is in no apparent hurry to add capacity merely to make its customers' lives easier. SaaS companies, sitting on cohorts that should statistically be churning at the margin, keep raising prices to cover costs and bolster margins, untroubled by the customers they may be losing. There must be exceptions to my pejorative summary that we now live in a world without sale signs. I have struggled to find any that matter.

The stock market is the financial market most leveraged to nominal growth, and I find evidence of this thesis every quarter in consistently expanding EPS, not just in the United States but everywhere a listed company is trying to clear a buyback hurdle.

The position is held with conviction. Which is precisely why it deserves the audit.

The first place I might be perjuring myself is on the durability of the price-setter behavior. I have written about this shift as if it were structural, without worrying about where it could become excessive. Companies discovered, during a rare supply shock, that demand was less elastic than they had feared, and have been running the experiment ever since. And experiments end. They end when extortionate pricing turns into societal pain visible enough to force policymakers to bring down the gavel. Politics may forbid the deployment of the standard monetary or fiscal toolkit, and that is exactly what will make the political wrath more unpredictable and more dangerous. If geopolitics forces price controls to emerge in one domain, or if fiscal constraints lead to redistributive taxes on windfall gains, we could see a repeat of what has already happened in global trade, where the rulebook has been rewritten to force an end to what had become unsustainable. The market will call it policy risk only after the multiple has already been noticed.

The second place is where the extent of demand destruction becomes impossible to ignore, even in equity markets. The innovations of the current era have begun to hurt employment, albeit so far more visibly in faraway places and marginal communities, viewed from the comfortable perch of the profitable corporation. Add cost-of-living inflation as its companion, and a sudden break in demand could ignite an asset-price deflationary cycle in which equities are dragged in early through default-related extraordinary losses. Financials remain a large part of the market and are always susceptible to massive reversals in the face of real-world decline, regardless of how robust their stress tests looked when times were good. In our view, the AI world is making more than adequate ROI in aggregate once we remove pejoratives like "picks and shovels," but we are ignoring the critical middle layers that the pessimists rightly identify as highly funding-dependent.

Once a vicious cycle starts and fear sets in, the winners' aggregate gains do not matter a whit. Their under-pressure managements will be the first to cut the oxygen lines of the intermediaries critical for their own overall profitability, purely to reassure everyone about the rationality of individual funding decisions. The accounting circularities through which some of those intermediaries have been kept alive so far will themselves become harder to defend in front of newly empowered regulators and far jumpier markets.

The ongoing innovation wave is a circular story made possible, in part, by a world without material macroeconomic turbulence. I have written every other risk in this piece as if that quiet would hold. Which is to say, I have built four self-audits on top of the one assumption I never audit. It would be a perjuration to keep treating the absence of that turbulence as a permanent piece of furniture.

Conclusion: Living in the Fast Lane

In the absurdly fast-moving worlds of geopolitics, innovation, and financial markets, time-invariant arguments are not principles. They are expensive habits. It is easy to spot this stubbornness in those drawing the opposite conclusions to mine, the AI-bubble callers and the TMT-chart-wavers, the person forever arriving at the year 2000 by redrawing the axis. The harder and more useful task is to find it in oneself. That is especially true for those of us with money actually at risk. It is easy, in the abstract, to hypothesize how nobly one would behave in adversity. In practice, adversity arrives with a drawdown, a client call, a better-sounding bear case, and the humiliating possibility that the people one mocked were early rather than wrong.

I may never abandon my long-term belief in innovation, which remains one of the world's rare credible growth themes, but conviction is not a license to stop updating. Nor is the risk of being wrong something one can fully hedge within the book. A concentrated long-only portfolio of the kind I run cannot insure against every adversarial reading of its own thesis without becoming an index, and at some point the only available defense is vigilance, the kind that, in listed markets, at least permits a change of opinion before it is too late. Self-audit matters for those of us not merely engaged in the commentary warfare of forecasts and counter-forecasts. Every position I hold today that I also held before Claude Code began visibly altering software work, before the Strait of Hormuz became a live market variable, or before semiconductor names doubled, is a candidate for both pejoration and perjuration. The minimum honest discipline left is to keep reading those who see the world differently, not to borrow their conclusions, but to test whether mine still deserve the confidence I have given them.