Now, the Hardware Disruptions

Wiwynn, a rack builder, partners with Ayar Labs, a silicon-photonics specialist. Optical connectivity is no longer a module business; it is a system-design problem that customers now own. One might brush this aside in isolation. Then, in roughly the same week: ASML announces an intention to enter the packaging equipment market; Nvidia places $2 billion each into Lumentum and Coherent in deals that look less like investments and more like strategic custody; and BESI, the world's dominant hybrid-bonding equipment specialist, surfaces as the reported subject of acquisition interest from equipment giants with far deeper pockets. Together, they ask a question the hardware industry has not had to answer before: whether sub-sectors and sub-industries are under existential pressure not just in software, where disruption from above is already well understood, but also in the physical layers of the stack.

The old hardware map was orderly. One company designed chips. Another supplied IP. Another packaged them. Another made substrates, optics, passives, retimers, memory controllers, or assembly lines. Another put the pieces into a server, another into a rack. AI is making that map look old-fashioned. The pressure is no longer merely competitive. It is architectural.

What has changed is not only the size of the winners but the reason they keep moving. In earlier hardware cycles, expansion into adjacent layers was about margin capture or bargaining power. In AI infrastructure, it is about physics. Every interface has a cost. Every handoff adds latency, power loss, thermal complexity, or yield drag. When the rack becomes the real unit of compute and cooling becomes part of performance rather than plumbing, the biggest players are pulled into neighbouring layers as a matter of engineering necessity.

The right phrase is profit-pool migration. Value is moving from the one excellent block toward the group that can make five blocks behave like one. The people under pressure are not obscure minnows. They are the respectable middle: optical module vendors, IP licensors, packaging specialists, OEMs, ODMs, and businesses whose economics depend on the world staying modular.

In software, this disruption is visible to everyone; large language models hollowing out the middleware layer is no longer a barely-noticed phenomenon. What is less appreciated is that an equivalent force is operating in hardware, with less fanfare and its own kind of structural severity. The boundaries between chip design and system integration, front-end and back-end, silicon and optics, compute and memory are dissolving, not primarily because giants are greedy, though that too is a factor, but because the engineering now demands it. Let's begin with the size and needs of today’s giants.

The Capital That Changed Everything

There is a plot device, most memorably deployed in the 1985 film Brewster's Millions, in which a man must spend a fortune in a fixed window without accumulating assets, which he finds enormously difficult. Imagine the plight of someone who may suddenly have to decide what to do with $100 billion.

South Korea's two memory giants are on track to individually approach or exceed $100 billion in operating profit in 2026 alone. These are figures that potentially exceed each company's cumulative historical earnings. Even after allowing for imprecision in the estimates, capex, debt cleanup, treasury-share cancellation, buybacks, and the ordinary obligations of a large listed company, both of these companies may still need divisions of people focused on what to do with the rest. TSMC is in much the same boat, despite capex of over $50 billion. These are not normal corporate surpluses.

The financial significance is not the numbers themselves. It is what those numbers do to competitive behaviour. A company generating this kind of cash can fund adjacent technology bets, absorb years of qualification cycles in neighbouring markets, subsidise hyperscaler co-development at cost, and wait out a specialist competitor that cannot. A mid-tier specialist (a packaging equipment maker, a connectivity IP licensor, a server integrator) enjoys none of these advantages. It must choose one technology direction, manage quarterly margin expectations, and depend on open-market competition to protect its position. The cost-of-capital gap between the giants and the specialists has never been wider, and it is widening further as hyperscaler demand functions as an implicit guarantee on giant balance sheets that no specialist can replicate.

This asymmetry matters because it turns what once looked like organic expansion into structural disruption of neighbours. The giant is not expanding to eat the specialist's lunch. It is expanding because co-design across more of the stack is the only way to capture the next level of system efficiency. The disruption of the specialist is a consequence, not a goal. That distinction makes little difference to the specialist.

The Archetype Nobody Names

Before the themes, it is worth naming the company that has made this pattern most explicit: Nvidia. One could, of course, write a series of articles on how its footprint expands with every product cycle, but as we said, this article is not about what the giants are doing by themselves, but about who is getting disrupted. Still, a quick review of Nvidia's deliberate expansion is important to see how it is directly and indirectly inspiring other giants.

Nvidia began the current decade as a GPU company. It is now, by its own characterisation, an AI factory company. The journey from chip to rack, through NVLink switch fabric, through the NVL72 architecture, through Spectrum-X networking, through the Omniverse simulation platform, through physical AI and the Isaac robotics stack, is a textbook study in how a company can redefine its unit of value without announcing that it is displacing the businesses around it. At each step, somebody nearby lost design authority, pricing power, or category relevance. The OEM lost rack architecture control. The networking switch vendor lost the scale-up fabric. The optical transceiver maker might be the next in line as Nvidia's investments in Lumentum and Coherent reshape who controls what in the optical supply chain.

Jensen Huang said at CES 2026 that "manufacturing plants are going to be essentially giant robots" and that Nvidia "builds entire systems now because it takes a full, optimised stack to deliver AI breakthroughs." That sentence, delivered casually, contains within it the disruption thesis for at least six sub-industries. Every other giant with the means to make a similar statement is either already executing or beginning to think about executing. The pattern is Nvidia's. The consequences are distributed.

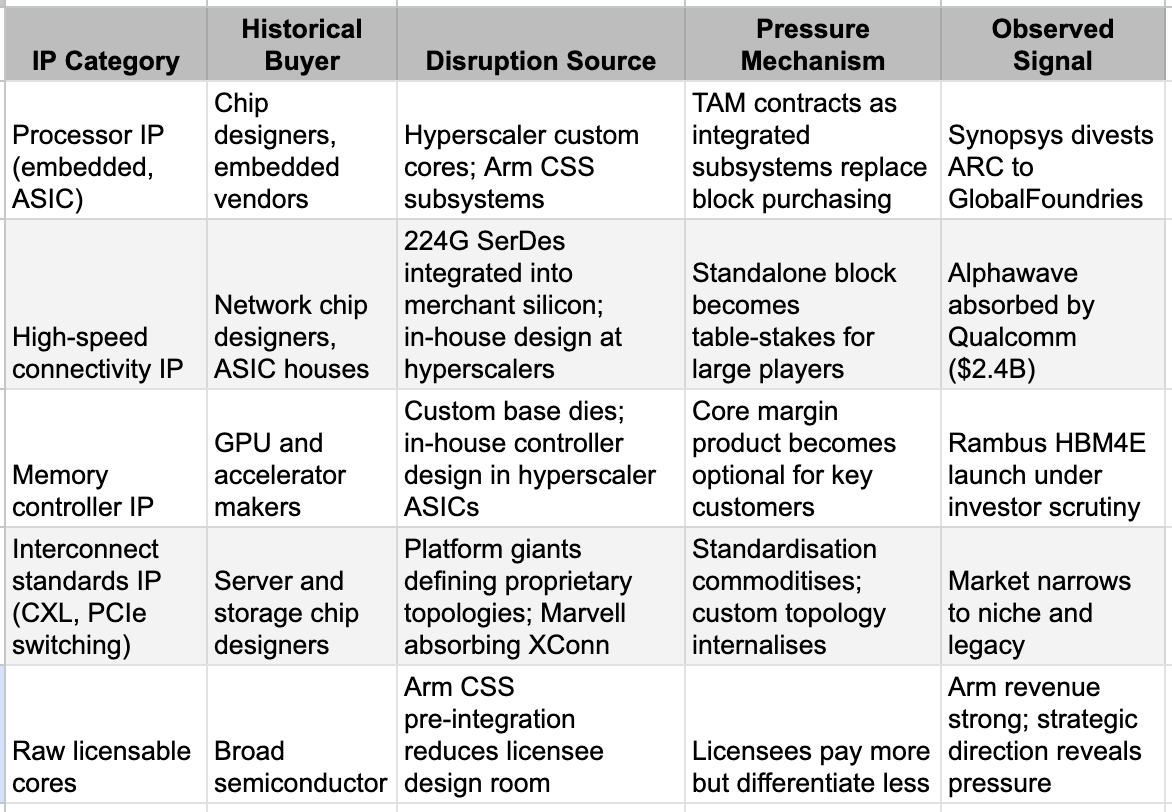

Theme I: The IP Middleware Squeeze

The foundational IP licensor model is to charge a royalty or upfront fee for a processor core, a memory controller, a high-speed SerDes block, or an interconnect IP package. The business rests on one assumption: that the customer cannot or will not design equivalent capability themselves. As we observed in our Real Chip War piece, hyperscalers’ custom silicon divisions are growing, with budgets and ambitions that will not let them stay confined to narrow areas of logic. A hyperscaler building its own inference chip for a specific memory architecture has every reason to design its own memory controller optimised for that exact hierarchy, rather than pay perpetual royalties on a general-purpose block carrying assumptions baked in for someone else's architecture. This is a claim about economics, not capability. For a company spending $150–200 billion on AI infrastructure annually, the in-house design cost is trivial relative to the royalty exposure at scale.

The downstream consequences are observable. Synopsys divesting its ARC processor IP business to GlobalFoundries signals that a discrete processor IP product, detached from a broader design system, has become harder to defend than the overall flow surrounding it. Alphawave, technically credible, commercially active in high-speed connectivity IP, being absorbed by Qualcomm signals the same thing one layer down: the standalone connectivity IP company becomes more valuable as a division of a larger silicon architecture than as an independently traded asset. Rambus is the current market test: its HBM4E memory controller IP is technically impressive, but investors are right to ask whether the five largest buyers of such capability will still need it in five years, or will have built it into their own custom base dies. That is not a question about Rambus's execution. It is a question about category survival under conditions no IP licensor created.

Arm is a more nuanced case. Its revenue growth has been robust, and its architectural pervasiveness remains exceptional. But the direction of its strategic response is instructive: offering pre-integrated Compute Subsystems rather than raw IP cores. This is not an upgrade of the product. It is an acknowledgement that the raw IP value proposition is under pressure, and survival requires moving up the stack to capture the integration value that the market would otherwise internalise. Even the strongest IP franchise in hardware is running harder to stay in the same place.

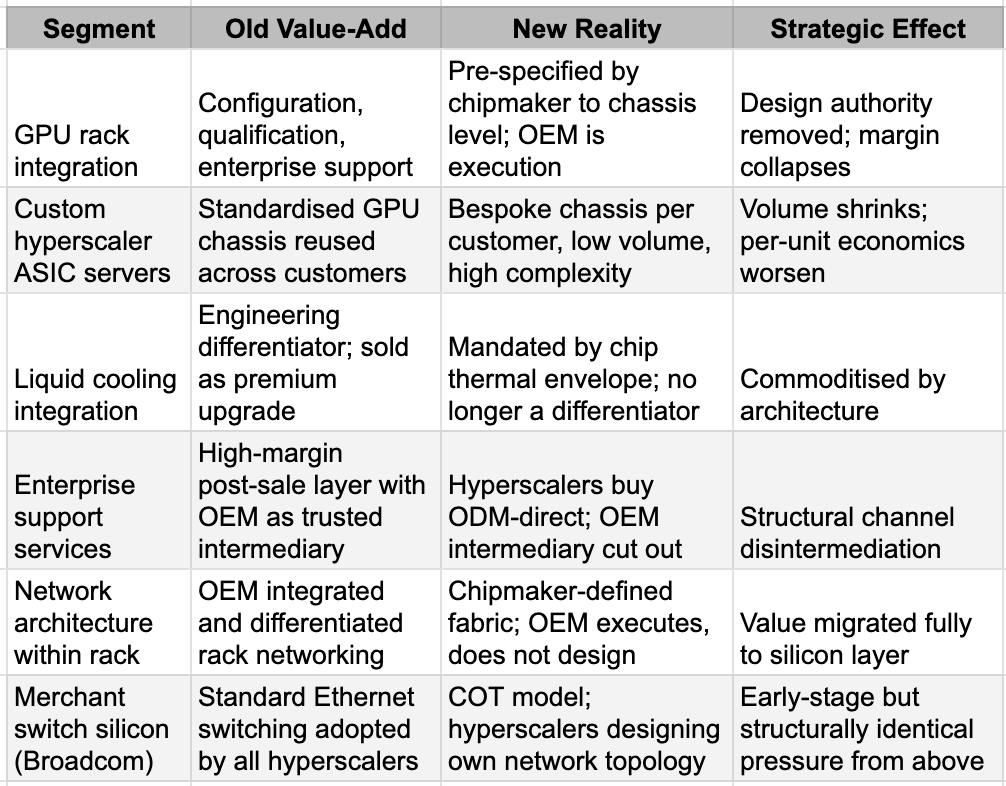

Theme II: The Assembler's Shrinking Perimeter

The traditional server OEM and ODM business model was, in essence, a value-added integration play: qualify third-party silicon, engineer the thermal and power architecture, manage complex supply chains, and provide enterprise support across long product cycles. That model worked when the GPU was the unit of value, and the rack was the OEM's canvas.

Two forces are now compressing this model simultaneously. The first is hyperscaler silicon internalisation. When Google's TPU, AWS's Trainium, or Microsoft's Maia displaces a standard Nvidia GPU in a rack, the standardised reference design that gave ODMs their economies of scale disappears. Custom ASIC servers are, by definition, bespoke: low volume, unique per customer, expensive to qualify, and difficult to earn a margin on at scale. TrendForce projects custom ASIC AI servers growing from 11% of the AI server market in 2025 to roughly 25% in 2026. Each percentage point of that shift is a percentage point of high-volume, standardised GPU rack orders that the assembler no longer receives. China is running the same dynamic in compressed time: Alibaba's Zhenwu chip, Baidu's Kunlun series, ByteDance's SeedChip, and Tencent's Zixiao are all in-house silicon programmes that remove their creators from the standard ODM customer pool for GPU racks.

The second force is architectural, and it is worth understanding precisely what has happened. A decade ago, Nvidia's commercial interest stopped at the chip and the board. It sold a GPU. The OEM took it from there: designing the server node, the chassis, the cooling architecture, the power delivery system, and the rack. That was the OEM's canvas and it was genuinely valuable engineering work.

With the GB200 NVL72, one sees a different product. It is not a chip or even a server. It is a fully specified, liquid-cooled, 72-GPU rack-scale system in which Nvidia defines the NVLink switch fabric topology, power delivery at up to 120kW per rack, the cooling manifold design, the thermal envelope, and the validated deployment blueprint down to cable routing. The OEM manufacturing this system is executing Nvidia's design, not contributing its own. Foxconn assembling GB200 NVL72 racks is working to Nvidia's specification at every level that matters. Engineering authority has migrated entirely upstream.

The commercial consequences for the likes of Dell, Super Micro, and HPE are structural rather than cyclical. Their value-add in the AI server market was integration: taking best-in-class components and assembling them into a validated, enterprise-grade system. When Nvidia pre-validates the entire system, that integration value disappears. What remains is manufacturing execution, supply chain management, and post-sale support. Super Micro has been living this reality: despite enormous revenue growth from AI server demand, gross margins collapsed from above 11% to barely 6% as the engineering differentiation that once justified premium pricing was absorbed by the chipmaker above it. Hardware still ships. The integrator's margin does not.

Even Broadcom, enormously profitable and dominant in merchant networking silicon, faces a variant of this logic from the other direction. The customer-owned tooling model, where hyperscalers increasingly design their own network chip architecture rather than adopt Broadcom's standard Ethernet switching products, is a distant but structurally identical signal. Broadcom has time and market position. The logic of the threat is the same one it is currently applying to the ODM layer below it.

Assembler and OEM Competitive Perimeter Compression

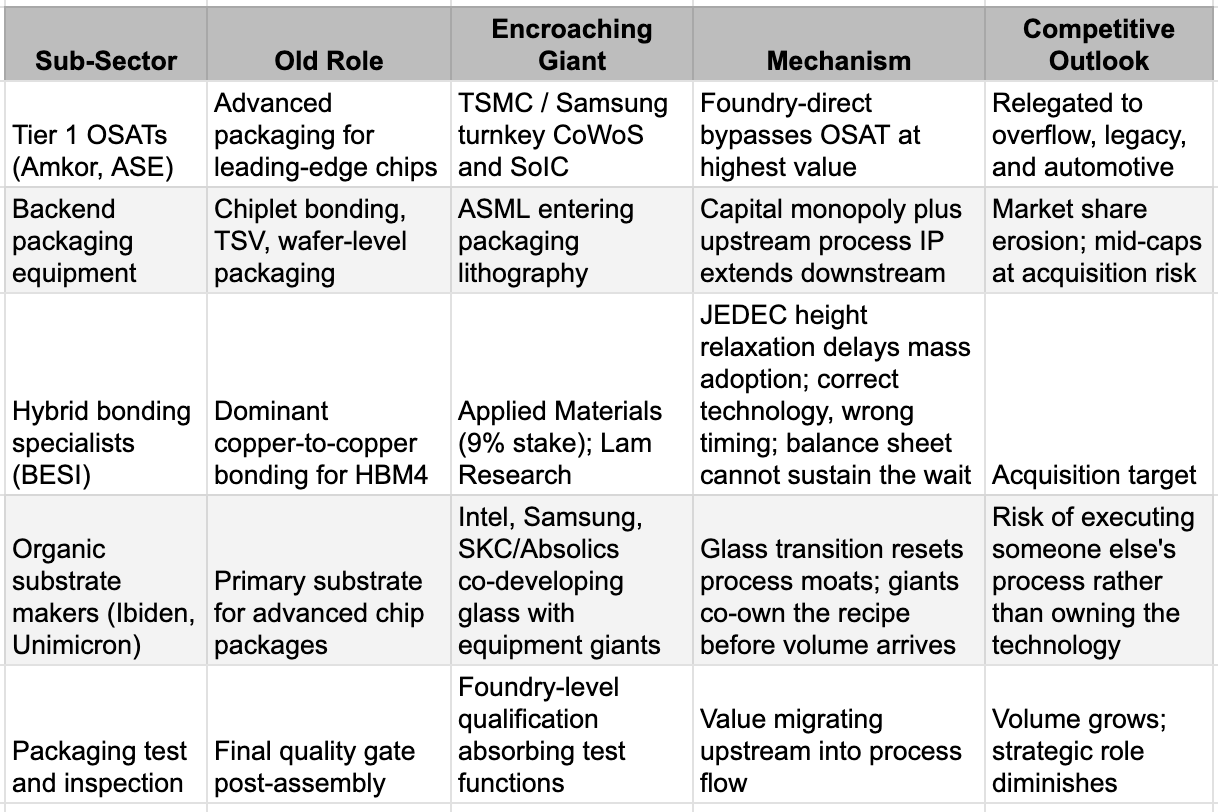

Theme III: Packaging — The Chokepoint That Attracts Predators

Advanced packaging was, until recently, a respected but unglamorous sector. OSATs assembled chips and ran test sequences. The front-end foundry was where the intellectual capital lived. The backend was where the labour lived. As we argued in Chip Design: Hardware's Software, manufacturing layers below chip design are now where the real constraint resides. CoWoS, SoIC, hybrid bonding, and glass substrates are the critical paths of every leading-edge AI chip. Without them, no AI accelerator of consequence ships. This is no longer housekeeping. It is the value centre.

The strategic consequence is predictable: critical chokepoints do not remain in the hands of specialists when giants have the means to internalise them. TSMC is now offering turnkey CoWoS and SoIC packaging directly to hyperscalers, competing with the OSAT providers it once only served as a foundry upstream. Even ASML announced the intention to enter packaging lithography with tools specifically targeting chiplet stacking, through-silicon via formation, and advanced interposer processing. This is not a product line extension. It is a front-end giant, capitalised to a degree no backend equipment company can match, moving into a market that backend specialists have owned for decades.

BESI is the cleanest case study in what happens when a specialist correctly identifies a chokepoint and then discovers that the giants around it can simply rewrite the rules.

BESI built a dominant position in hybrid bonding, the copper-to-copper interconnect technology that was widely considered inevitable for next-generation HBM stacking. The logic was sound: as HBM stacks moved from 12-high to 16-high configurations, the original JEDEC height limit of 720 micrometres made hybrid bonding the only viable path. BESI invested accordingly, polishing its equipment platform in anticipation of mass adoption.

Then, a few days ago, JEDEC quietly relaxed the height specification to 775 micrometres for both 12-high and 16-high HBM4 stacks, for cost reasons. Samsung and SK Hynix, as dominant JEDEC participants, had every incentive to lobby for a standard that preserved their existing tooling. The relaxation gave them that. Hybrid bonding adoption is now deferred to HBM4E at the earliest, and more likely to HBM5, where 20-high stacking may finally make the standard height limit genuinely binding. 2026 was supposed to be a critical turnaround year for BESI, and now it is again waiting for a mass-production window that has shifted by at least a few quarters. The deeper irony is that BESI's technology remains strategically important. That strategic importance is precisely why Applied Materials, already holding a 9% stake, and Lam Research are reportedly circling the company. Being too early at a chokepoint and running low on patience is exactly the moment when a larger balance sheet becomes attractive. BESI may be acquired not because it failed but because it was right about the destination while the industry took a detour, and it cannot afford to wait alone.

The organic substrate makers face a different kind of pressure. Almost everyone in the industry is racing toward glass substrates. Glass offers lower warpage, higher interconnect density, and better dimensional stability than organic alternatives. These properties matter increasingly as chiplets and HBM stacks demand ever-flatter, more dimensionally stable foundations. On its own, this would be a normal, broad materials transition. But in the course of it, the giants are also expanding their areas of operation and causing more trouble than usual for the organic substrate incumbents. Intel has invested $1 billion in a glass substrate R&D line in Arizona; Samsung is coordinating a group-wide effort across its electronics, display, and electro-mechanics divisions; and SKC's Absolics was co-founded with Applied Materials and is building its first production facility in Georgia. Unlike organic substrates, where Ibiden and Unimicron built their moats over decades of proprietary process chemistry, glass is a clean-slate technology. The giants co-developing it with foundries and equipment makers may well own the process recipe by the time volume production arrives, leaving independent substrate makers to execute rather than innovate.

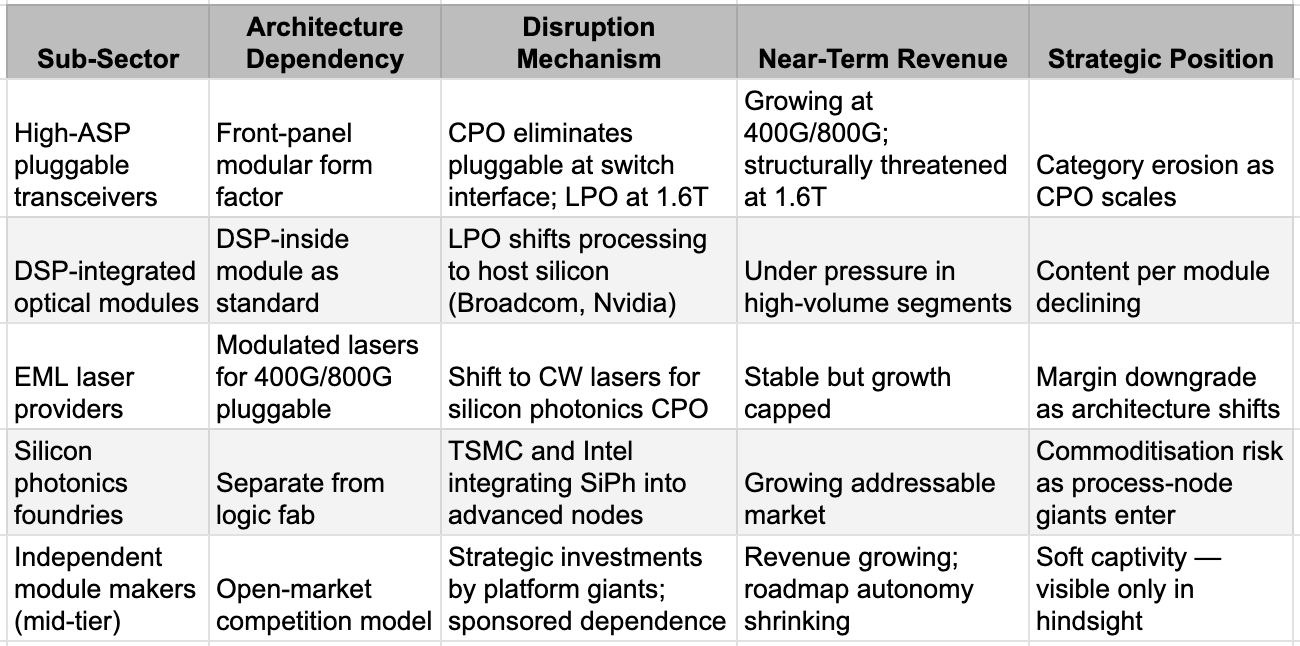

Theme IV: Optical — Booming in Volume, Disrupted in Architecture

This one is such a big topic on its own that we had a whole note on it last week. The consensus trade on optical is that it is a straightforward beneficiary of AI infrastructure growth. The consensus is right about volumes and wrong about value distribution. Total optical intensity in AI data centres is rising steeply. That is not the story. The story is that the architecture through which optical functions are delivered is changing in ways that shift value within the optical stack even as the stack itself expands.

The pluggable transceiver was, for two decades, the optical industry's highest-margin standalone product: a self-contained module managing its own laser and signal processing, replaceable and independently priced as generations advanced. Co-packaged optics moves the optical engine from the front-panel cage onto the switch or accelerator package itself. Linear pluggable optics removes the DSP from inside the module entirely, delegating signal processing to the host silicon. Both transitions compress the logic that justified premium pricing on the pluggable module as an independent value layer. A product whose architecture has been absorbed by the surrounding system is, by definition, a product in structural margin decline even when its volume is rising.

The laser chain illustrates this with precision, as we examined in our optical sector work. Silicon photonics co-packaged architectures favour continuous-wave laser sources over the electro-absorption modulated lasers that commanded premium pricing in the 400G and 800G pluggable era. EML providers built their businesses around that margin. CW laser sources are lower-cost and simpler. They are not a competing product line in the conventional sense, but a technology substitution driven by an architectural choice made one or two layers above the laser supplier's control. Revenue grows; margin compresses; the category has been downgraded without anyone explicitly competing against it.

Nvidia's $2 billion each into Lumentum and Coherent could be the sharpest example of what sponsored dependence looks like from the outside. A supplier whose roadmap is co-funded and whose production capacity is committed to a single platform giant has secured near-term revenue at the cost of long-term autonomy. The line between customer, investor, and eventual architectural controller is thin. It is worth asking whether the investment is support or the beginning of something else.

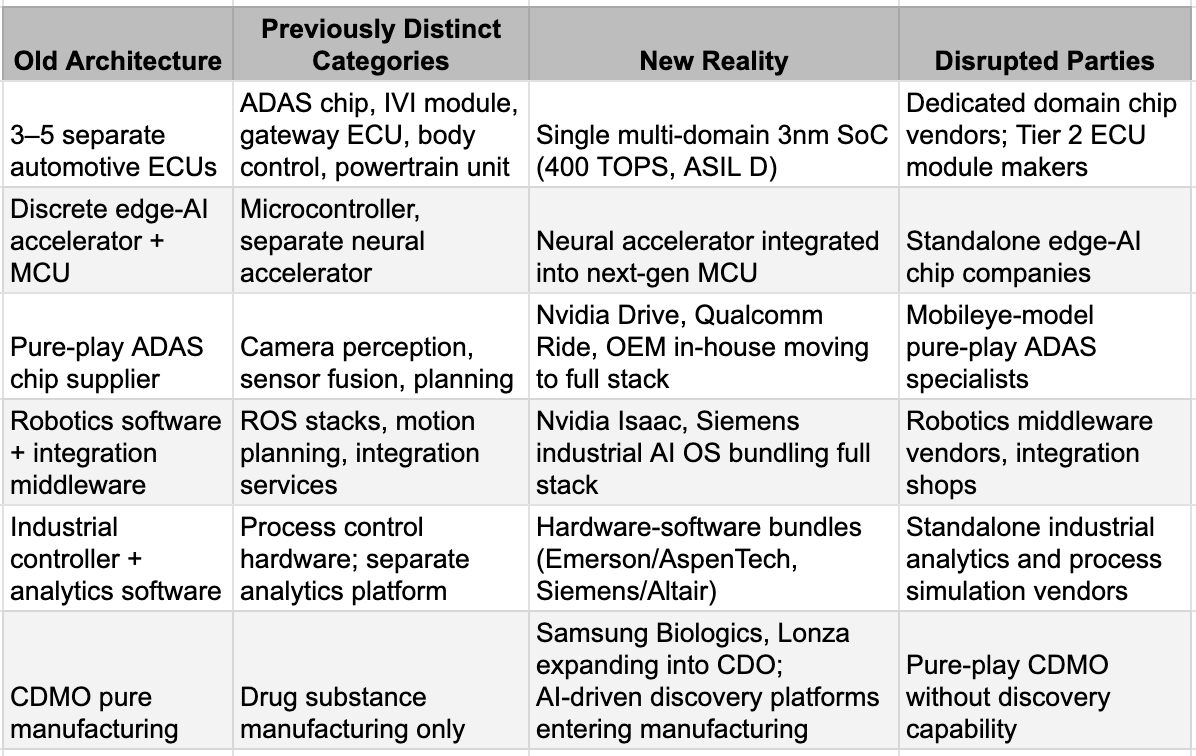

Theme V: Category Fusion — When Sub-Sectors Stop Existing

The most structurally underestimated form of hardware disruption is not one layer encroaching on another. It is an entire sub-sector ceasing to exist as a distinct category because a single chip or platform simultaneously absorbs functions that once required multiple suppliers.

The automotive electronics industry has been defined for thirty years by a distributed architecture: dedicated electronic control units for ADAS, for infotainment, for gateway networking, for body control, for powertrain management. Each ECU was a product line for a distinct Tier 1 or Tier 2 supplier. The ecosystem held because no single device could plausibly run all these functions simultaneously while meeting automotive safety standards. A 3nm multi-domain SoC running ADAS, infotainment, and gateway on one chip with hardware-level isolation and full ASIL D safety compliance changes that calculus. It does not compete with each ECU vendor individually. It makes the question of maintaining three separate vendors for three separate modules look architecturally redundant. This is not incremental competition. It is category fusion, and the disrupted parties are the dedicated domain chip suppliers and the Tier 2 ECU module makers who cannot replicate that level of integration.

Robotics is the newest expression of the same logic. Nvidia's Isaac platform, comprising Cosmos, GR00T, Omniverse simulation, and Jetson edge compute, provides, in a single stack, what a generation of robotics software companies, integration middleware vendors, and simulation tool providers used to sell separately. Hyundai Motor Group recently committed to a $26 billion investment, including a new robotics factory capable of producing 30,000 robots per year. An automotive giant is now also a robot manufacturer, a fleet deployer, and a foundation model customer. The companies that sold factory automation middleware, robot integration services, and standalone motion-planning software are competing with the stack from above and the manufacturing scale from below simultaneously.

In industrial settings broadly, Siemens absorbing Altair, Emerson absorbing AspenTech, and Nvidia's partnership with Siemens on an industrial AI operating system are all expressions of the same force: hardware-software bundles that eliminate the standalone industrial analytics software vendor, the process control specialist, and the industrial simulation company as distinct purchasing decisions. The procurement category collapses into the platform.

China extends this pattern with its own urgency. Huawei's design of the NPU, the interconnect, the server, and the software into a single sovereign stack is the most complete expression of category fusion anywhere.

Tech’s Goliath Inversion

Giants squeezing out the middlemen has been a familiar story in almost every industry: retail, banking, logistics, and of course, asset management. Technology has been different. Its complexity protected the specialists. The pace of innovation rewarded the nimble and flexible. The more intricate the system, the more indispensable the focused expert. For most of the industry's history, that held.

On the innovation side, for thirty years, Clayton Christensen's disruption framework governed how technology investors thought about competitive risk: small entrants find overlooked niches, improve relentlessly, and eventually unseat the complacent giant. The pattern was real. The dynamic worked because capital was the insurgent's advantage: cheap, fast, and aimed at underserved markets that incumbents were too profitable to bother with.

What AI infrastructure has done is invert that logic. Complexity no longer protects the specialist. It attracts the giant. The more critical a layer becomes to system performance, the more intolerable it is for the largest players to leave it in someone else's hands. This is the force running through every theme in this piece. The common thread is not that these businesses are failing. It is that the architecture is moving around them, and the entities with the capital and the physics-driven rationale to drive that movement are larger, better-funded, and more purposeful than anything the hardware middle class has faced before.

The evidence for whether this cycle is more severe than historical hardware consolidation waves lies in the simultaneity. In prior cycles, one or two layers of the stack came under pressure at a time: foundries consolidated, then memory, then networking. What is observable in 2025 and 2026 is pressure across IP, packaging, optics, system integration, automotive electronics, robotics middleware, and industrial software simultaneously. Every layer that was once protected by the assumption of modularity is now under pressure from the assumption of integration.

The danger for investors is in the temporal gap between financial performance and strategic trajectory. Unlike many of the themes we have discussed in the last two years, the real financial impact may not be immediate. In fact, for some, the announcements of strategic investments and acquisitions could be seen as another reason for a re-rating. Many of the sub-sectors described here will continue to report revenue growth for several quarters. Volume is rising across optical, packaging, automotive electronics, and industrial hardware. The problem is that demand can remain strong while strategic importance migrates elsewhere. A business can grow in units, shrink in pricing power, lose architectural relevance, and still appear healthy in a quarterly earnings release. It will take far longer to acknowledge the real impact of the disruptions in hardware than in software. But the force is likely to be relentless. The giants have too much money. More importantly, they now have reasons. And in hardware, reasons are often far more dangerous than resources.