A Full Circle

For my first solo project in engineering school, I went for one in optical communication. It was a hot subject, immensely promising even back then, but one I also found genuinely complicated. When it came time to pick a bigger project, I quietly switched to microelectronics. Silicon wafers were easier. Decades later, it appears life has come full circle.

Optical communication stocks are the flavour of the year in 2026. And with that status has come a wave of simplistic top-down arguments. The narrative runs as follows: 2024 was about GPU shortages, 2025 was about memory and HBM, and so 2026 must be about the optical component shortage. Setting aside the large gaps in valuations, these arguments are wrong at multiple levels.

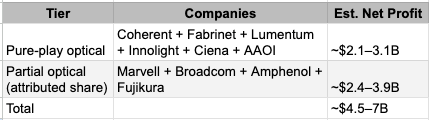

Consider the scale of what is being discussed. The two largest Korean memory companies alone are expected to generate combined operating profits approaching $200 billion in 2026. Add TSMC or Micron, and the net profit of any three of these four companies could exceed $200 billion. That figure is comparable to the combined net profit of every listed company in India. Pure-play optical component companies, by contrast, will collectively earn something in the range of $2 to $3 billion in net profit this year. That is roughly 1 to 2 percent of what the four semiconductor giants earn.
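A quick back-of-the-envelope check of that ratio, as a short Python sketch. It uses only the ranges quoted above and treats $200 billion as a floor for the semiconductor pool (the text says any three of the four companies "could exceed" it), so a larger pool would only push the optical share lower.

```python
# Rough 2026 profit-pool comparison using the ranges quoted in the text.
# All figures are in US$ billions. The semiconductor figure is treated as a
# floor, so the resulting percentages are upper-end estimates.

def optical_share(optical_profit_bn: float, semis_profit_bn: float) -> float:
    """Return optical profit as a percentage of the semiconductor pool."""
    return 100.0 * optical_profit_bn / semis_profit_bn

semis_floor_bn = 200.0          # floor for the semiconductor pool
for optical_bn in (2.0, 3.0):   # pure-play optical range from the text
    share = optical_share(optical_bn, semis_floor_bn)
    print(f"${optical_bn:.0f}bn vs ${semis_floor_bn:.0f}bn -> {share:.1f}%")
```

With the floor value the share comes out at 1.0 to 1.5 percent, consistent with the "roughly 1 to 2 percent" framing.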

Total Optical Ecosystem Profit Pool: Rough Aggregate 2026

The scale gap matters because it puts optical's investment proposition in context. But scale alone is not the story. The more important point is this: the optical field is full of rival technologies, multiple simultaneous disruptions, and companies stepping on each other's toes, in ways that make the narrative of a clean, rising tide deeply misleading. On top of that, the larger semiconductor companies are expanding their silicon capabilities precisely to absorb functions that optical component companies currently own. The laser goes inside the silicon package. The switching moves to photons. The transceiver merges with the ASIC. Every one of these moves is a threat to someone's existing revenue.

This note examines eight technology rivalries that we believe matter most for listed optical companies. It is not a list of reasons to be bearish. It is an attempt to map the terrain honestly, because the stocks in this space deserve analytical honesty more than most. We should warn casual readers that the middle sections are fairly technical and matter most to those investing directly in some of these names. Others may prefer to jump straight to the conclusion.

One more structural reality before the rivalries. The five largest hyperscalers are no longer passive consumers of optical specifications set by Nvidia or the standards bodies. Google designs its own TPU accelerators and has built an optical circuit-switch topology around them. AWS Trainium and Inferentia run on custom network fabrics specified internally. Meta's MTIA connects through the topologies Meta controls. Microsoft's Maia sits inside an Azure network architecture that Microsoft is actively redesigning. Each of these programmes creates a different optical specification. The absence of a single dominant customer architecture has slowed CPO standardisation because five hyperscalers are each prototyping it differently, and they are not the only ones. For optical component vendors, this is both an opportunity and a complication. The market is large. The requirements are divergent. A product qualified for one hyperscaler's topology may not transfer cleanly to another's.

The framework runs outside-in: from the scale-out networks connecting racks across a data centre floor, into the scale-up fabrics connecting GPUs within an AI cluster, down to the frontier where optics begins to displace copper at and inside the chip package itself. Within each tier, the same question keeps recurring: can a newer, cleaner technology displace the incumbent before the incumbent improves fast enough to stay relevant?

1. The Protocol War

Think of a protocol as the language two chips use to talk to each other. Before you can debate which laser goes inside the transceiver, or whether copper survives another generation in the rack, there is a more fundamental question: which language are these devices even speaking?

Three protocol wars are running simultaneously, at three different distances.

Inside the AI cluster, GPUs talk to each other using either InfiniBand or Ethernet. InfiniBand is Nvidia's language. It is fast, reliable, purpose-built for AI training, and in practice sold only by Nvidia. Every switch and cable in an InfiniBand cluster carries Nvidia's margin. The hyperscalers, who are spending tens of billions on AI infrastructure, find this expensive. Their alternative is RoCE (RDMA over Converged Ethernet), which brings the same remote-direct-memory-access ideas to open Ethernet infrastructure, using switches from Arista and chip designs from Broadcom. Meta, Google, and AWS have all built large AI clusters on Ethernet rather than InfiniBand. The Ultra Ethernet Consortium, backed by AMD, Intel, Broadcom, and Arista, is standardising the next generation specifically to make InfiniBand substitutable. This is not an abstract standards debate. If Ethernet wins at scale, that means sustained revenue growth for Arista and Broadcom. If InfiniBand holds, Nvidia's networking segment, already a multi-billion-dollar business, compounds further.

Inside servers, and increasingly across the rack, the dominant language is PCIe. The next version, PCIe Gen 6, doubles the bandwidth of Gen 5. This is where Astera Labs lives: its retimer chips regenerate PCIe signals so they can travel further over copper than a bare board trace or cable would allow. That said, every major AI chip vendor has a proprietary interconnect that outperforms PCIe within its own ecosystem, and the question is whether PCIe/CXL can close that performance gap fast enough to remain relevant as AI clusters consolidate around fewer, tighter proprietary fabrics.
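For readers who want the doubling in numbers, here is a minimal Python sketch of raw per-direction x16 bandwidth. It assumes the published signalling rates of 32 GT/s per lane for Gen 5 and 64 GT/s for Gen 6, and deliberately ignores encoding and protocol overhead, so the results are raw rates rather than usable payload.

```python
# Raw per-direction link bandwidth for PCIe Gen 5 vs Gen 6.
# Assumption: encoding (128b/130b in Gen 5, FLIT/FEC in Gen 6) and protocol
# overheads are ignored, so these are raw signalling rates, not payload.

TRANSFER_RATE_GT_S = {5: 32, 6: 64}  # giga-transfers per second per lane

def raw_bandwidth_gb_s(gen: int, lanes: int = 16) -> float:
    """Raw one-direction bandwidth in gigabytes per second."""
    bits_per_second = TRANSFER_RATE_GT_S[gen] * 1e9 * lanes  # one bit per transfer per lane
    return bits_per_second / 8 / 1e9

for gen in (5, 6):
    print(f"PCIe Gen {gen} x16: {raw_bandwidth_gb_s(gen):.0f} GB/s per direction (raw)")
```

This prints 64 GB/s for Gen 5 and 128 GB/s for Gen 6, the doubling referred to above.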

CXL, which runs on top of PCIe, adds the ability for servers to share memory pools across machines, which is potentially transformative for AI workloads that are memory-bandwidth constrained. Whether CXL's memory pooling ambitions are realised depends partly on whether optical can extend PCIe reach across racks economically, which loops back directly into Rivalry 8 discussed below.

PCIe's real rival, however, is Nvidia's NVLink. This proprietary protocol, which links GPUs within an Nvidia cluster, is faster and more bandwidth-dense than PCIe. For scale-up inside an Nvidia rack, NVLink is not competing with PCIe; it has already won. PCIe is what everything else uses.

Further out, connecting clusters across a campus or between data centre buildings, the debate is between proprietary hyperscaler fabrics (Google's Jupiter, Meta's Express Backbone, AWS's custom designs) and standards-based approaches. Each hyperscaler has effectively written its own specification, which is why there is no single agreed standard for CPO connectors, no single agreed timeline for OCS adoption, and no single optical vendor that can serve all five hyperscalers with the same product design. This fragmentation is the hidden structural tax on every optical company's R&D budget.

The protocol layer matters for this report because it sets the demand environment within which every hardware rivalry plays out. A world where Ethernet wins scale-out broadly is a world with more standardised optical transceivers and more Arista switches. A world where each hyperscaler runs a proprietary fabric is a world of bespoke optical qualifications and compressed margins for vendors who have to certify the same product five times over.

SCALE-OUT

Rack to rack across the data centre floor. The world of pluggable transceivers, optical switches, and the cables that link thousands of servers.

2. EML Lasers vs Silicon Photonics with CW Lasers

This is the most commercially urgent rivalry in the optical world, because it could reshape the margin structure of the two most discussed pure-play optical stocks: Lumentum and Coherent.

For the past decade, the dominant laser inside every optical transceiver has been the Electro-Absorption Modulated Laser, or EML. It is an indium phosphide chip that does two things simultaneously: it generates the light and modulates it to encode data. EMLs are compact, proven, and perform well at the 100G-per-wavelength speeds that current 400G and 800G transceivers require. They are also expensive. Indium phosphide is a difficult compound semiconductor to grow. Yields are lower than for silicon, wafers are smaller, and the process is less automated. Lumentum and Coherent have built substantial businesses supplying EML chips to transceiver makers, and they price accordingly; in shortage-driven 2026, that is an understatement.

Silicon photonics takes a fundamentally different approach. It builds the optical routing and modulation functions on standard silicon, the same material as every other chip, using existing semiconductor fabs. This makes it dramatically cheaper to scale. The catch is that silicon cannot generate light. So a silicon photonics transceiver still needs a laser, but a much simpler one. Instead of a complex EML that modulates as it emits, a silicon photonics design uses a Continuous Wave laser, a device that simply shines steady light. All the modulation happens on the silicon chip downstream. CW lasers are simpler and cheaper to make, and are available from a wider range of suppliers.

The commercial consequence is significant, however much enthusiasts may want to dismiss it. As silicon photonics penetrates the transceiver market, it does not eliminate the laser business. It replaces a high-value, complex EML with a lower-value, simpler CW laser. The revenue from one CW laser unit is meaningfully lower than from one EML. Even if total laser volume grows as transceiver shipments rise, the margin per unit compresses. This is not a cliff. It is a slope. But it is a real slope, and the descent has already begun. At 1.6T speeds, silicon photonics already accounts for an estimated 20 to 30 percent of new module designs and is rising. The next generation, 3.2T, is expected to be predominantly silicon photonics.
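The "slope, not cliff" argument can be made concrete with a small Python sketch. The per-module laser values and the volume growth below are hypothetical placeholders, not figures from this note or from any company; only the direction of the result is meant to carry over.

```python
# Hypothetical illustration of the "slope, not cliff" argument.
# Assumptions (not from the text): an EML-based module carries 1.0 unit of
# laser revenue and a silicon photonics (CW laser) module carries 0.4.

EML_VALUE = 1.0   # hypothetical relative laser revenue per EML-based module
CW_VALUE = 0.4    # hypothetical relative laser revenue per SiPh module

def blended_laser_value(siph_share: float) -> float:
    """Average laser revenue per transceiver for a given SiPh share (0..1)."""
    if not 0.0 <= siph_share <= 1.0:
        raise ValueError("siph_share must be between 0 and 1")
    return (1.0 - siph_share) * EML_VALUE + siph_share * CW_VALUE

def laser_revenue(modules: float, siph_share: float) -> float:
    """Total laser revenue index: module volume times blended value."""
    return modules * blended_laser_value(siph_share)

# Hypothetical scenario: module volume up 50% while SiPh share goes 0% -> 50%.
before = laser_revenue(modules=100, siph_share=0.0)
after = laser_revenue(modules=150, siph_share=0.5)
print(f"per-module value: {blended_laser_value(0.0):.2f} -> {blended_laser_value(0.5):.2f}")
print(f"total revenue index: {before:.0f} -> {after:.0f}")
```

Under these made-up inputs, a 50 percent rise in volume lifts laser revenue by only about 5 percent, because the value per module falls by 30 percent.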

The optimists say Lumentum and Coherent adapt by selling more CW lasers at higher volumes and by supplying into co-packaged optics, where their laser expertise still matters. The bears note that more suppliers can make CW lasers, that pricing power therefore erodes, and that the companies that truly win the silicon photonics transition are the foundries, Tower Semiconductor and TSMC, not the laser makers. The risks that could delay the silicon photonics transition are real: coupling a CW laser efficiently onto a silicon chip is technically difficult, indium phosphide supply constraints protect EML pricing in the near term, and at 200G per lane, EML performance still leads what silicon modulators can match at the frontier.

3. DSP-Based Pluggables vs Linear Pluggable Optics (LPO)

Almost every pluggable transceiver shipped today contains a Digital Signal Processor. It is often likened to a spell-checker inside the module: the electrical signal coming from the switch gets cleaned up, errors get corrected, and only then is it converted to light. On the receive side, the process reverses. DSPs add reliability and reach. They also add cost, latency, and, critically, power. A modern 800G pluggable module consumes roughly 12 to 15 watts, largely because of the DSP.

Linear Pluggable Optics removes the DSP from the transceiver entirely. The signal path stays linear and analogue, and signal processing is pushed back to the host switch ASIC. The transceiver becomes simpler and cheaper. Power drops by 30 to 40 percent. This matters enormously in a data centre with hundreds of thousands of ports. Meta has tested LPO. Google has championed it. Arista's founder has publicly argued that LPO can match co-packaged optics on power efficiency at 1.6T, which, if true, delays the need for the far more disruptive architecture change described in Rivalry 4 below.
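Using the ranges above, a rough fleet-level estimate of what LPO saves can be sketched in a few lines of Python. The 100,000-port fleet is a hypothetical round number at the low end of "hundreds of thousands", and the sketch counts module power only, before any cooling overhead.

```python
# Fleet-level power saved by LPO, using the ranges in the text:
# 12-15 W per 800G DSP-based pluggable and a 30-40% power reduction.
# Assumption: a hypothetical fleet of 100,000 ports; module power only.

def fleet_savings_mw(ports: int, module_watts: float, reduction: float) -> float:
    """Megawatts saved if every port swaps a DSP pluggable for LPO."""
    return ports * module_watts * reduction / 1e6

PORTS = 100_000  # hypothetical port count
low = fleet_savings_mw(PORTS, module_watts=12.0, reduction=0.30)
high = fleet_savings_mw(PORTS, module_watts=15.0, reduction=0.40)
print(f"Estimated savings: {low:.2f} to {high:.2f} MW")
```

The result, roughly 0.36 to 0.60 MW of continuous module power per 100,000 ports, scales linearly with port count.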

The disruption here is not primarily to optical hardware companies. It is to the DSP chip vendors. Broadcom sells a meaningful volume of optical DSP chips into the transceiver market. Marvell, which acquired Inphi specifically for its optical DSP portfolio, has built its data centre revenue partly on this business. LPO does not eliminate Broadcom or Marvell from the equation; in many cases, it shifts the processing to their own ASICs, but it reduces per-port revenue from the discrete DSP chip. The main delay is technological and operational: LPO places an immense electrical engineering burden on the switch's circuit board. Driving the analogue signal without a DSP requires premium PCB materials and flawless trace routing, which makes interoperability a hurdle. LPO works best when the hyperscaler controls the entire link end-to-end. Enterprise buyers, who expect multi-vendor interoperability, are slower to adopt it. Broad hyperscale deployment at 800G is under way, with 1.6T LPO specifications being finalised.

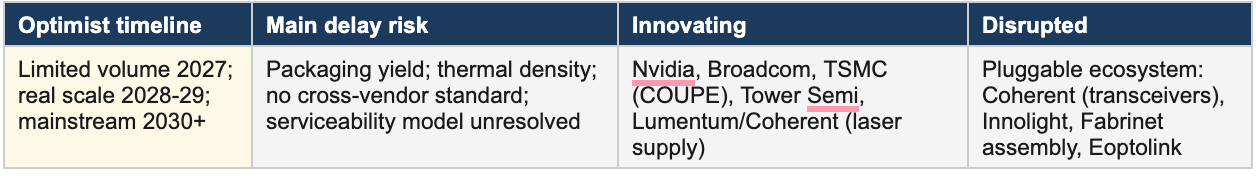

4. Pluggable Transceivers vs Co-Packaged Optics (CPO)

This is the rivalry every optical investor has heard about. It is also the one most misunderstood in terms of timing and mechanism.

Today, optical transceivers are hot-swappable modules that plug into the front panel of a switch. You can replace them without touching anything else. The industry has been perfecting this for fifteen years. Pluggable transceivers are the single largest revenue segment in the optical components market. Coherent, Innolight, Eoptolink, and Fabrinet (as a contract manufacturer) all rely heavily on it.

Co-packaged optics integrate the optical engine directly into, or adjacent to, the switch chip package, eliminating the long electrical trace between the ASIC and the transceiver. At today's speeds, this electrical trace is already a significant source of signal degradation and power loss. At 1.6T and beyond, running a high-speed electrical signal any meaningful distance becomes increasingly difficult. CPO fixes this at the source. Power savings are estimated at 30 to 50 percent per port. Nvidia's Quantum-X switch, Broadcom's Bailly platform, and TSMC's COUPE photonic integration roadmap are all CPO designs. Scale-out CPO platforms like Broadcom's Bailly are explicitly designed to replace front-panel pluggables but are currently shipping in trial volumes too small to materially cannibalise an industry measured in billions of annual transceiver revenue.

In practice, CPO's first deployments are not replacing pluggable fibre modules. They are replacing copper connections inside AI scale-up nodes, specifically the copper cables connecting GPUs to switches within a rack. That is a different market from the pluggable transceiver market serving fibre between racks. The initial CPO ramp is therefore additive to the total optical market, not immediately cannibalistic. The disruption of scale-out pluggables is real, but it is a 2028 to 2030 story.

CPO has been three years away for almost a decade. The 2018 roadmaps targeted 2021. The 2021 roadmaps targeted 2024. The industry consensus has now shifted to 2027 or 2028 for limited volume and 2029 to 2030 for real scale. The delay drivers are consistently the same: packaging yield is extremely difficult, thermal management is unsolved, and serviceability remains a genuine concern. If the optical engine fails, replacing it means disassembling a switch that costs hundreds of thousands of dollars.

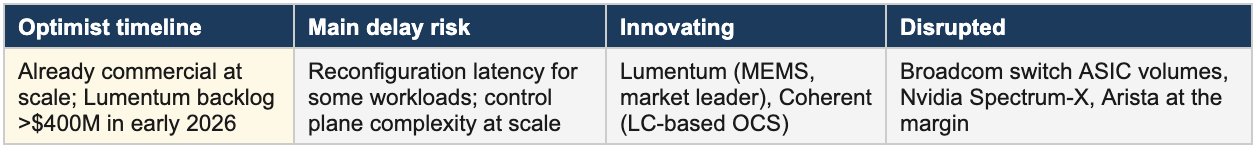

5. Electronic Packet Switching vs Optical Circuit Switching (OCS)

This rivalry is different in character. It is not about replacing copper with light. It is about whether light can take over a function that electronics has owned completely: switching data traffic inside the data centre fabric.

A conventional data centre uses electronic switches. Arista, Cisco, and Nvidia supply these switches. Broadcom designs the switch ASICs inside them. An Optical Circuit Switch uses tiny mirrors or liquid crystal cells to physically redirect beams of light without converting them to electricity. The OCS does not read the content of the data. It simply opens and closes optical paths, like a railway junction for photons. Because it never converts the signal, it adds almost no latency and is dramatically more power-efficient for high-throughput, predictable traffic patterns. Google has deployed OCS at scale across the majority of its data centres. Meta has followed. The use case is reconfiguring network topology to match AI training job patterns.
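The "railway junction" idea can be sketched as a toy Python model: an OCS is essentially a reconfigurable one-to-one mapping from input ports to output ports that passes the signal through untouched. The class and method names here are hypothetical and do not reflect any vendor's control interface; a packet switch, by contrast, would have to parse each packet's header to decide where it goes.

```python
# Toy model of an optical circuit switch (OCS) as a reconfigurable
# one-to-one mapping of input ports to output ports. Hypothetical names;
# the switch never inspects payloads, it only decides which fibre
# connects to which.

from typing import Dict, Tuple

class OpticalCircuitSwitch:
    def __init__(self, num_ports: int) -> None:
        self.num_ports = num_ports
        self.circuits: Dict[int, int] = {}  # input port -> output port

    def configure(self, mapping: Dict[int, int]) -> None:
        """Install a new topology; each output may serve only one input."""
        if len(set(mapping.values())) != len(mapping):
            raise ValueError("an output port can carry only one circuit")
        for src, dst in mapping.items():
            if not (0 <= src < self.num_ports and 0 <= dst < self.num_ports):
                raise ValueError(f"port out of range: {src}->{dst}")
        self.circuits = dict(mapping)  # reconfiguration replaces all paths

    def forward(self, in_port: int, light: bytes) -> Tuple[int, bytes]:
        """Pass the signal through unchanged: no parsing, no buffering."""
        return self.circuits[in_port], light

ocs = OpticalCircuitSwitch(num_ports=4)
ocs.configure({0: 2, 1: 3, 2: 0, 3: 1})   # topology for one training job
print(ocs.forward(0, b"payload"))          # (2, b'payload')
ocs.configure({0: 1, 1: 0, 2: 3, 3: 2})   # re-wire for the next job
print(ocs.forward(0, b"payload"))          # (1, b'payload')
```

The design point the sketch captures is that all the intelligence sits in `configure`, which runs only when the topology changes; `forward` does no per-packet work at all.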

Lumentum is the clear market leader in OCS, with its backlog exceeding $4500 million as of early 2026, while estimates of the total OCS market for 2026 have been revised upward from $500 million to over $1.5 billion. Coherent is positioned as the secondary player. This is genuinely new revenue for both companies: not a substitution of one product for another, but an expansion into a role that did not commercially exist for them three years ago.

The disruption target is real but bounded. OCS reduces the number of times data makes an electrical-to-optical-to-electrical conversion across the network, which means fewer switch hops and potentially fewer switch ports. Arista's moat is its network operating software rather than the physical switching hardware, which gives it some insulation. The more exposed parties are Broadcom's switching-ASIC volumes and Nvidia's Spectrum-X networking business. OCS cannot replace packet switching entirely, but it can eliminate interior switching layers, and each eliminated layer is a switch and a set of pluggables that someone does not buy.

SCALE-UP

GPU to GPU within the AI cluster. Short distances, brutal requirements. Copper has held on here far longer than optics advocates predicted.

6. Copper Scale-Up Cables vs Active Optical Cables

Inside an AI training cluster, thousands of GPUs communicate at extreme speeds and minimal latency. Today, Nvidia's NVLink and NVSwitch system runs primarily on copper. Direct-attach copper cables and active copper cables connect GPUs to switches for distances up to two or three metres. Copper is cheap, latency is predictable, and power consumption per bit is genuinely lower than optical at these short distances. Amphenol, TE Connectivity, and Molex supply most of this connectivity.

Active optical cables embed tiny transceivers at each end of a fibre, converting electrical to optical and back. They are lighter, handle longer distances, and their bandwidth does not degrade with length the way copper does. At distances beyond two or three metres, which start to matter when AI clusters scale to hundreds of thousands of GPUs across a physical facility, copper struggles to maintain signal integrity at 800G-plus speeds.

Nvidia has been explicit about its preference. It has publicly stated that it is going to extreme lengths to avoid going to optics in scale-up and does not expect to cross that threshold for most applications until 2027 or 2028 at the earliest. The reasoning is economic. An optical cable for this application costs several times more than a copper equivalent, and at 100,000-GPU scale that difference translates to hundreds of millions of dollars of incremental infrastructure cost per deployment. Broadcom's commentary has been similar. Better active copper cables with improved electronic equalisation keep buying one more generation, which is the main reason the optical crossover date has repeatedly slipped.
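To see how a per-cable price multiple turns into hundreds of millions of dollars, here is a short Python sketch. Every input (links per GPU, copper cable price, optical multiple) is a hypothetical placeholder chosen for illustration, not a supplier price or an Nvidia figure.

```python
# How a per-cable price multiple compounds at cluster scale.
# Every input below is a hypothetical placeholder chosen for illustration;
# none comes from Nvidia, Broadcom or any supplier price list.

def incremental_cost_usd(gpus: int, links_per_gpu: int,
                         copper_cost_usd: float, optical_multiple: float) -> float:
    """Extra spend if every copper scale-up link is replaced by an optical one."""
    links = gpus * links_per_gpu
    optical_cost_usd = copper_cost_usd * optical_multiple
    return links * (optical_cost_usd - copper_cost_usd)

extra = incremental_cost_usd(gpus=100_000, links_per_gpu=4,
                             copper_cost_usd=300.0, optical_multiple=4.0)
print(f"Incremental cost: ${extra / 1e6:.0f} million")  # Incremental cost: $360 million
```

The exact answer depends entirely on the placeholder inputs; the point is that the premium is paid once per link, and link counts grow with GPU count.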

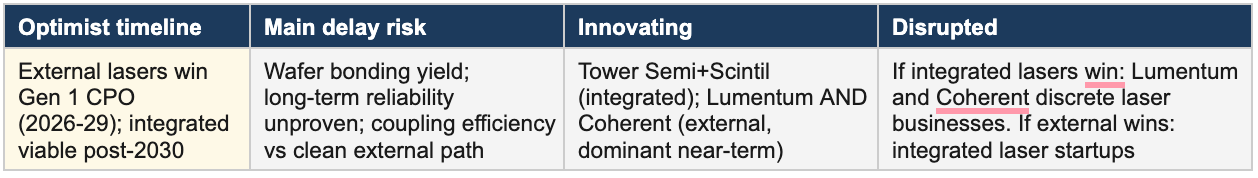

7. External Laser Sources vs Integrated Lasers inside CPO

This rivalry is nested inside the CPO debate but deserves separate attention because it determines which companies capture value within the CPO architecture as it matures.

Co-packaged optics places the optical engine right next to the switch chip. The problem is heat. Lasers are temperature-sensitive: a shift of ten degrees Celsius can alter their wavelength, reduce their efficiency, and shorten their lifespan. The switch ASIC next to them runs far hotter than a precision laser tolerates. Someone has to solve this.
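The wavelength half of that problem can be put in numbers with a short Python sketch. It assumes a drift of about 0.1 nm per degree Celsius, a commonly quoted order of magnitude for uncooled DFB-type lasers (real coefficients vary by design), and compares it with a hypothetical 200 GHz channel grid at 1310 nm, using the standard conversion from frequency spacing to wavelength spacing, Δλ = λ²Δf / c.

```python
# Temperature-induced wavelength drift versus WDM channel spacing.
# Assumption: ~0.1 nm per degree C, a commonly quoted order of magnitude
# for uncooled DFB-type lasers; actual coefficients vary by design.

C_M_PER_S = 299_792_458.0  # speed of light in a vacuum

def drift_nm(delta_t_c: float, coeff_nm_per_c: float = 0.1) -> float:
    """Wavelength shift for a given temperature change."""
    return delta_t_c * coeff_nm_per_c

def spacing_nm(center_nm: float, spacing_ghz: float) -> float:
    """Convert a frequency grid spacing to wavelength: d_lambda = lambda^2 * d_f / c."""
    lam_m = center_nm * 1e-9
    return (lam_m ** 2) * (spacing_ghz * 1e9) / C_M_PER_S * 1e9

shift = drift_nm(10.0)               # the ten-degree swing from the text
grid = spacing_nm(1310.0, 200.0)     # hypothetical dense O-band grid
print(f"drift {shift:.2f} nm vs channel spacing {grid:.2f} nm")
```

Under these assumptions a ten-degree swing moves the laser by about 1 nm, close to the full width of a 200 GHz channel at 1310 nm, which is why thermal isolation matters so much in a dense CPO design.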

Two schools of thought have emerged. The External Laser Source approach physically separates the laser from the hot package. The laser lives in a cooler, accessible location and light is piped into the optical engine via a short fibre. It can be serviced or replaced without disturbing the switch ASIC. Lumentum and Coherent both have external laser source product lines and have positioned this as the practical solution for first-generation CPO deployments. Nvidia's Quantum-X CPO design explicitly uses an external laser source for exactly this reason.

The competing approach pushes toward integrated lasers, bonding III-V semiconductor material directly onto the silicon photonic chip using heterogeneous integration. Tower Semiconductor's collaboration with Scintil Photonics is an early commercial move in this direction. If integrated lasers work at scale, the laser becomes part of the photonic chip, eliminating the external fibre connection. For Lumentum and Coherent, the external laser path clearly preserves a discrete, high-value product. Integrated lasers reduce their role to supplying epitaxial material into a chip they do not design or control, a far less defensible position. Most observers expect external laser sources to dominate first-generation CPO deployments, with integrated lasers becoming competitive somewhere in the 2030 to 2032 timeframe.

NEAR-PACKAGE AND IN-CHIP

The frontier where optics begins to displace copper at and inside the chip package. Most of this is 2028 and beyond. The architecture bets placed today will determine who benefits.

8. Electrical Retimers vs Optical I/O Chiplets

SerDes and retimer chips are the high-speed electronic circuits that shoot data off a chip and receive it from other chips across a circuit board or cable. Retimers boost and clean the signal partway along the copper path, allowing it to travel further before degrading. Astera Labs, Marvell, and Broadcom have built substantial businesses supplying these chips for PCIe and CXL connectivity in AI server platforms. Astera's revenue depends on copper-based connectivity remaining the dominant way to connect AI accelerator chips to memory and to the network.

Optical I/O chiplets represent the eventual structural threat. Instead of a retimer boosting a copper signal, an optical chiplet converts the signal to light right at the chip package boundary. The light travels with essentially no degradation over distances that would be impossible for copper. Ayar Labs has demonstrated UCIe-standard optical chiplets that integrate into a chip package alongside processor dies. Lightmatter's Passage platform goes further, proposing a photonic interposer that replaces the silicon interposer currently used in multi-chip packages with one that carries data optically.

The commercial timeline is genuinely further out than for the other rivalries in this report. Even the optimists at Ayar Labs and Lightmatter acknowledge that mainstream deployment in AI accelerator packages is a 2028 to 2030 story. The physics is proven. The challenge is manufacturing at the precision required, testing at scale, and proving years of reliability. Astera Labs therefore faces this as a long-dated structural risk rather than an immediate threat. Its near-term revenue from PCIe Gen 5 and Gen 6 retimers is tied to a demand wave that continues regardless of optical I/O progress. The more immediate pressure comes from PCIe-over-optics at the rack boundary, which a PCI-SIG working group is actively standardising, and which could reduce retimer attach rates from 2027 onwards.

The Same Three Obstacles, Every Time

Across all seven hardware rivalries, the same three obstacles repeatedly decide whether cannibalisation happens in two or three years or in eight or ten.

Reliability at scale is the hardest gate. A technology can work in a lab, in a pilot, even in a limited deployment. It cannot cross into the mainstream until it works reliably for years, across tens of thousands of units, with predictable failure modes that a data centre operations team knows how to handle. CPO has been clearing the lab and pilot stages for several years. Proving itself in deployment, at fleet scale, is what keeps pushing the date out.

Serviceability changes the economics of everything. Pluggable transceivers survive in part because replacing one takes thirty seconds and does not require touching anything else. CPO and near-package optics challenge this model fundamentally. Any design that requires fibre work inside a dense chassis will face resistance regardless of its technical merits.
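A rough way to see why serviceability weighs so heavily is to compare the "blast radius" of a single failure, sketched here in Python. All inputs are hypothetical assumptions: the same annual failure rate per optical unit, a 64-port switch, and the simplification that repairing a CPO engine takes the whole switch offline while a pluggable swap affects only its own port.

```python
# Hypothetical comparison of failure "blast radius" for pluggables vs CPO.
# Assumptions (not from the text): identical annual failure rate per
# optical unit, a 64-port switch, and that a CPO repair takes the whole
# switch offline while a pluggable swap affects only its own port.

def ports_disrupted_per_year(optical_units: int, annual_failure_rate: float,
                             ports_per_failure: int) -> float:
    """Expected port outages per year across a fleet."""
    return optical_units * annual_failure_rate * ports_per_failure

UNITS = 50_000      # hypothetical fleet of optical engines or modules
AFR = 0.01          # hypothetical 1% annual failure rate
SWITCH_PORTS = 64   # hypothetical switch radix

pluggable = ports_disrupted_per_year(UNITS, AFR, ports_per_failure=1)
cpo = ports_disrupted_per_year(UNITS, AFR, ports_per_failure=SWITCH_PORTS)
print(f"pluggable: {pluggable:.0f} port outages/yr, CPO: {cpo:.0f} port outages/yr")
```

Even with identical reliability per unit, the disruption scales with how many ports one repair takes down, which is the serviceability gap described above.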

Hyperscaler commitment converts roadmaps into timestamps. The reliable leading indicator for an architecture shift is not a paper or a prototype. It is a multi-year purchase commitment from a hyperscaler that has validated the technology, the supply chain, and the operational model. Lumentum's OCS backlog is that signal for optical circuit switching at present. When equivalent commitment-level signals appear for other rivalries, the relevant timelines will compress quickly.

How the Rivalries Land on Select Listed Stocks

Twelve More Rivalries Worth Knowing

The seven hardware rivalries above determine the near-term fates of most listed optical stocks. The twelve below are real and active. Several will matter enormously by 2030. None is commercially decisive in the next two or three years.

The Conversation Always Runs Ahead

In technology, and certainly in optical interconnect, the conversation has always been more about what lies ahead than what exists today. The gap between what is being planned and what is actually implementable on a given schedule is a continuous feature of the field, not an exception. The rivalries described in this note are genuine. They are not imagined threats invented by analysts. But the fact that a competing technology is being seriously pursued does not mean it will arrive on the aggressive timeline that its most optimistic advocates believe in. CPO's story is the clearest example. Serious people have been seriously working on it for over a decade. It is still not here at scale.

What is striking about the optical sector in 2026 is that, despite this well-documented history of slippage, almost everyone in their respective corners is reaching the most aggressive conclusions available to them. Those in the existing businesses, whether pluggable transceivers or EML lasers or copper connectivity, continue to argue that the competing or displacing technologies will not emerge in time to threaten their current volumes and margins. Those on the disruption side make exactly the opposite assumptions, projecting rapid adoption curves and market share gains on schedules that have, in this industry, rarely been met.

Both groups of companies often trade at extremely high multiples. This is notable because semiconductor companies with comparable or even higher growth rates and considerably more earnings certainty frequently trade at significantly lower multiples. The optical premium reflects excitement about the technology and about the scale of AI infrastructure investment. It also necessarily reflects a set of optimistic assumptions about which technologies win, how quickly, and what happens to the margins of today's incumbents in the transition. Some of those assumptions will prove correct. Others will not. The field is too contested, and the history of ambitious timelines is too consistent, to assume otherwise.

All that said, this is a space facing immense shortages, and there are ways to invest without excessive disruption risk. The near-term demand is real. One simply needs to study harder than the top-down narrative requires. In optical, unlike in most hardware sectors, strong revenue growth and genuine product-level uncertainty coexist in the same stock at the same time.