All three of our most recent themes continue to develop rapidly. The first is AI's reach into politics and economics. This week, a Korean politician proposed that society at large share in the windfall profits accruing to Samsung Electronics and SK Hynix. That specific proposal will likely go nowhere. Many similar proposals, from economists and politicians in every society, will not be dismissed so easily. The second is the rising capability of models and the cybersecurity burden that turns AI from a want, something one can debate whether to buy, into a need at any price. Days ago, Google's threat intelligence team disclosed what it believes is the first zero-day exploit developed using AI, a two-factor authentication bypass caught before its planned mass deployment. The next round will not be intercepted as easily.

The third, and the subject of this piece, is that AI is an unusually expensive and inflationary technology. Prices of almost everything in the AI stack are rising rapidly. The silicon shock is the visible half. The other half is the strange new pricing behavior emerging from software companies. Their industry dynamics differ sharply from those of the chip and model makers, and their pricing decisions, taken right now, are likely to play out very differently as a result.

The Hockey Stick Everyone Is Positioning For

Enterprise AI spending is on a vertical trajectory and is widely expected to remain on one. The story is no longer a forecast. It is something CIOs, vendors, and analysts now treat as a near certainty.

As has been widely flagged, hyperscaler capital spending will clear USD 725bn this year across the four largest US providers alone, with the broader infrastructure envelope approaching the trillion-dollar mark once everyone else is included. That is roughly double the prior year. Cloud backlogs at the leading hyperscalers have ballooned to record multiples of their previous levels, reflecting customer commitments that did not exist twelve months ago. By the end of 2026, 40% of enterprise applications are expected to feature task-specific AI agents, up from a low single-digit base a year and a half earlier. Whatever the precise final numbers, the curve points sharply upward, and almost everyone in the technology supply chain is positioning to capture a share of the spending it represents.

The acceleration in the past six months feels different from earlier waves. Before generative coding tools matured into widely used agents, most enterprises were debating and piloting. Now they are building. The volume of agent-related contracts, projects, and integrations inside large organizations has shifted from quarterly experiments to weekly deployments. Regulated industries are putting agents into production, not just sandboxes. Every major enterprise software vendor has launched some flavor of an agent platform in the past four quarters. Hardware vendors have repriced their roadmaps around the assumption of sustained agent workloads. Cloud providers are designing their next-generation services around the assumption that agents will be the dominant API traffic.

The bull case for enterprise software inside this narrative is straightforward. Every dollar of agentic activity has to flow through some application, some workflow, some data store, some governance layer. Whoever owns the layer collects something on the flow. The narrative has produced enthusiastic valuations for vendors who claim a position somewhere in the agent stack. It has also produced an unmistakable scramble to grab a position before someone else does.

That scramble is the part of the story worth examining closely, because it is not playing out the way the bull case assumed.

Who Needs OPEC?! New Tollbooths Everywhere

Textbooks tell us that competition pushes prices down until returns on marginal business approach the cost of capital of the leading, most efficient providers. If those leaders want to earn more, they must cartelize behind closed doors and fix prices at elevated levels, without fear of rivals disturbing the balance. If only the world were that simple.

Software vendors have begun charging customers for letting AI agents access the data and workflows that the customer already pays to use. Not for new functionality, in most cases. For permission to use existing functionality in a new way: through agents.

Enterprise software has always been a layered business. By historical design, customer data sits inside containerized applications: customer relationships in one vendor's database, financial records in another, support tickets in a third. The containerization was never accidental. It was the architectural premise of the entire SaaS era. Each container had its own specialized applications, its own interface, its own access controls, and its own pricing model, typically based on the number of human users clicking through that interface.

Agents change that assumption. An agent does not click. A human employee might interact with a piece of software fifty times a day. An autonomous agent, working a complex supply-chain task, might issue thousands of API calls across four different systems in a few seconds. The traditional per-seat model was built for human speeds. When the same infrastructure begins absorbing machine-speed traffic from users that were never licensed, the case for some new pricing layer is genuine. The vendors have responded by installing metered checkpoints between their containers and the agents trying to talk to them.
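A toy calculation makes the mismatch concrete. The sketch below compares a flat per-seat fee with per-call metering for a human making roughly fifty interactions a day and an agent making thousands. Every price, volume, and name here is a hypothetical assumption chosen for illustration, not any vendor's actual rate card.

```python
# Toy comparison: per-seat licensing vs. per-call metering.
# All prices and volumes are hypothetical, not any vendor's rates.

SEAT_PRICE_PER_MONTH = 100.0  # hypothetical flat fee per licensed human user (USD)
PRICE_PER_CALL = 0.001        # hypothetical metered fee per API call (USD)
WORKDAYS_PER_MONTH = 21

def per_seat_cost(seats: int) -> float:
    """Monthly bill under per-seat licensing; independent of how much each seat is used."""
    return seats * SEAT_PRICE_PER_MONTH

def metered_cost(calls_per_day: int, days: int = WORKDAYS_PER_MONTH) -> float:
    """Monthly bill if every API call were metered."""
    return calls_per_day * days * PRICE_PER_CALL

HUMAN_CALLS_PER_DAY = 50      # roughly the human figure used in the text
AGENT_CALLS_PER_DAY = 20_000  # assumed: "thousands of calls" sustained through the day

print(f"one seat, flat fee:   {per_seat_cost(1):8.2f}")
print(f"human, if metered:    {metered_cost(HUMAN_CALLS_PER_DAY):8.2f}")
print(f"agent, if metered:    {metered_cost(AGENT_CALLS_PER_DAY):8.2f}")
print(f"agent/human traffic:  {AGENT_CALLS_PER_DAY / HUMAN_CALLS_PER_DAY:.0f}x")
```

Under these assumed numbers, one agent generates about 400 times the traffic of a human user, while the per-seat model collects nothing extra for it. That gap is what the new pricing layers are trying to close.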

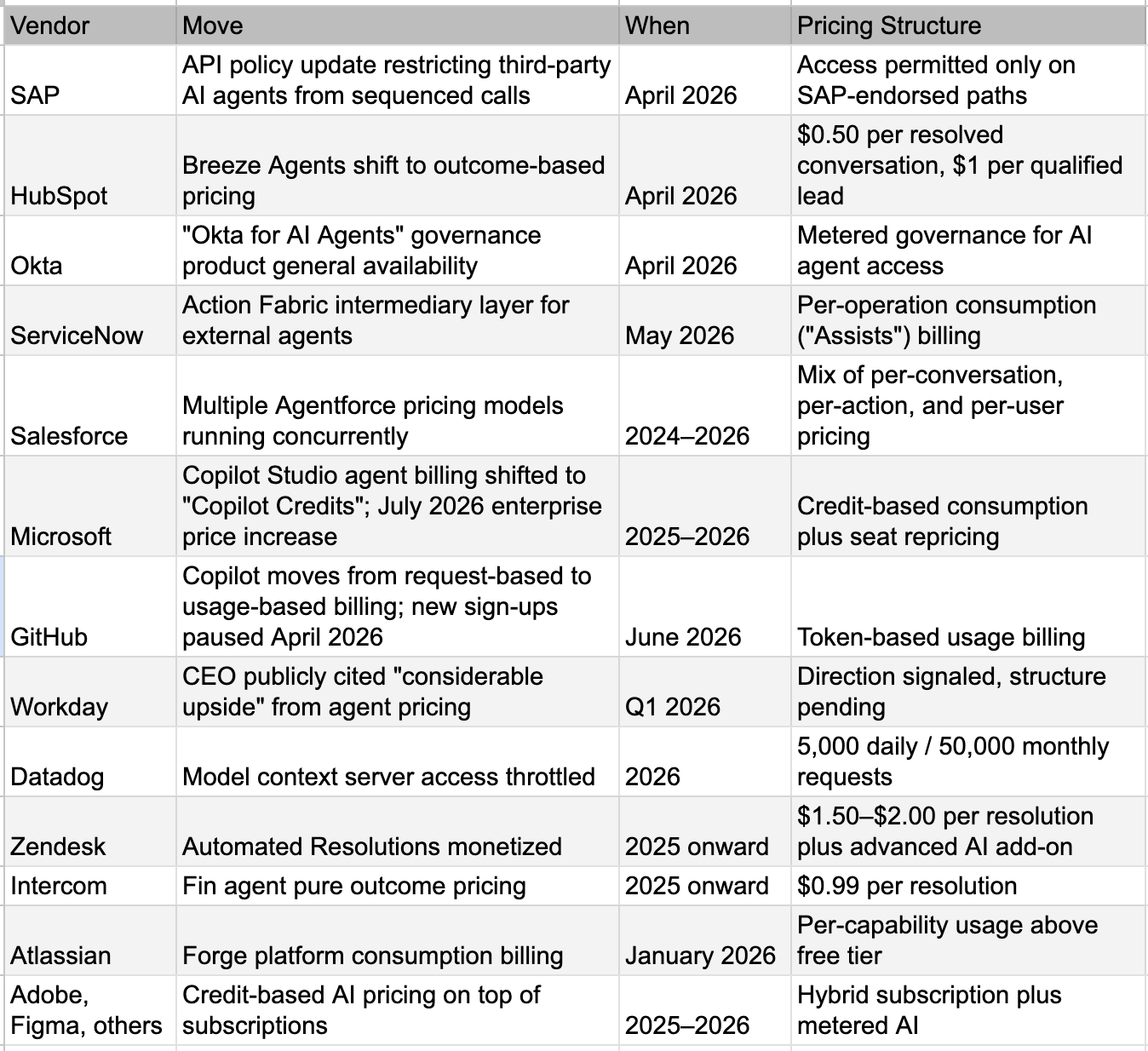

The recent wave of announcements makes the pattern visible.

What stands out is not any single move. It is the convergence: even a partial list shows a dozen significant adjustments in a handful of months. Salesforce alone now operates several concurrent pricing models for the same product, less by design than because the market has not yet agreed on what a single unit of agent value should cost.

Cost-Push Clearly a Factor

The clearest illustration of why this pricing wave is happening at all sits inside GitHub Copilot. Internal documents leaked in April revealed that the weekly cost of running Copilot had nearly doubled in the first months of 2026. GitHub paused new sign-ups for individual paid tiers in April and announced a move from request-based to usage-based billing starting June 1, 2026. A simple subscription that used to cost USD 10 or USD 39 a month became, for some users, a bill running into hundreds of dollars. The pricing change was not a strategy chosen from a position of strength. It was a response to an underlying cost base that had run away from the existing revenue model.

The vendors describe these moves as governance offerings. They explain that agents need authorization, scoped permissions, audit trails, and protection against the rate at which a machine, unlike a human, can hammer an API. All of that is genuinely true. Agents do create new operational challenges, and the engineering work involved in adapting to them is real.

It is also worth being clear about what is being sold. The tools that handle authorization, role-based permissions, identity management, single sign-on, and API rate limiting have existed inside enterprise stacks for two or three decades. The agent era has increased their utility, and some have needed reworking for machine-speed traffic. But the underlying capability is not new. What has changed is the willingness, and the necessity, to charge for it.

The pricing also tends to track the value of the underlying data rather than the marginal cost of providing access. ServiceNow's metering captures a slice of every workflow an agent runs through it. SAP's restrictions push every authorized agent through a path that benefits SAP. Salesforce's per-action and per-conversation pricing scales with the value the agent extracts, not with the cost of the API call.

Customer grievances are rising. Two phrases keep appearing in customer commentary on these moves. The first is "paying twice for the same data." The second is "paying to access our own information." The framing is unusual for enterprise procurement discussions. Customers are not arguing primarily about cost. They are arguing about principle. Principle arguments tend to travel further than cost arguments, and they tend to attract regulatory attention faster.

There is also an operational concern that finance teams are starting to flag. Agents are non-deterministic. They fail, they retry, they sometimes loop. Under consumption pricing, every retry consumes billable credits. A malfunctioning agent left running over a weekend can produce a bill that no one signed off on. The exposure is unbounded by design. Finance teams have credible reason to worry about pricing models that meter what a machine does, when the machine sometimes does the same thing thousands of times in a row by accident.
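The weekend-loop risk is easy to simulate. The sketch below assumes a hypothetical flat credit charge per agent attempt and an agent stuck in a retry loop. Without a cap, the bill grows with the loop; a simple client-side budget guard (the `Meter` class here is a hypothetical illustration, not any vendor's API) stops charges at a fixed ceiling.

```python
# Sketch: retries under consumption pricing, with and without a spending cap.
# CREDITS_PER_ATTEMPT, Meter, and run_agent are hypothetical, not a vendor API.
from dataclasses import dataclass
from typing import Optional

CREDITS_PER_ATTEMPT = 2  # hypothetical credits billed per agent attempt

class BudgetExceeded(Exception):
    """Raised when a charge would push usage past the configured cap."""

@dataclass
class Meter:
    budget: Optional[int] = None  # None = no cap, so exposure grows with every retry
    used: int = 0

    def charge(self, credits: int) -> None:
        if self.budget is not None and self.used + credits > self.budget:
            raise BudgetExceeded(f"charge would exceed cap of {self.budget} credits")
        self.used += credits

def run_agent(meter: Meter, max_attempts: int, succeed_on: Optional[int] = None) -> bool:
    """Simulate an agent that retries until it succeeds or runs out of attempts.

    Every attempt is billed, whether or not it succeeds. With succeed_on=None
    the agent never succeeds, modelling a loop left running over a weekend.
    """
    for attempt in range(1, max_attempts + 1):
        meter.charge(CREDITS_PER_ATTEMPT)
        if succeed_on is not None and attempt >= succeed_on:
            return True
    return False

# Uncapped: all 10,000 failed retries are billed.
uncapped = Meter()
run_agent(uncapped, max_attempts=10_000)
print("uncapped credits used:", uncapped.used)

# Capped: billing stops at the ceiling and the loop is halted.
capped = Meter(budget=100)
try:
    run_agent(capped, max_attempts=10_000)
except BudgetExceeded as exc:
    print("halted:", exc)
print("capped credits used:", capped.used)
```

A cap like this bounds the exposure, but someone has to build and enforce it; under pure consumption pricing, nothing in the billing model itself does.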

Not every new pricing model is the same. HubSpot charging only when an agent actually resolves a conversation, or Zendesk charging only for successful automated resolutions, are different propositions from pure transit metering. Outcome-aligned pricing, where the unit of value is legible to the buyer, may find more durable acceptance than pricing built on machine activity that the buyer cannot easily evaluate. The strongest version of the thesis is not that all usage pricing is a tollbooth. It is that pricing that charges for machine transit without a clear, buyer-trusted value unit will face resistance.
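The difference between the two models shows up clearly on the same traffic. The sketch below bills a hypothetical batch of support conversations twice: once per agent action (transit metering) and once per resolved conversation (outcome pricing). The rates and conversation data are invented for illustration, and amounts are kept in integer cents to avoid floating-point rounding.

```python
# Sketch: transit metering vs. outcome-aligned pricing on the same traffic.
# Rates and conversations are hypothetical; amounts are in integer cents.
from dataclasses import dataclass

CENTS_PER_ACTION = 10       # hypothetical transit rate: every agent action is billed
CENTS_PER_RESOLUTION = 100  # hypothetical outcome rate: only resolutions are billed

@dataclass
class Conversation:
    actions: int    # agent steps / API calls the vendor can meter
    resolved: bool  # whether the agent actually resolved the issue

def transit_bill(convs: list[Conversation]) -> int:
    """Charge for machine activity, regardless of result."""
    return sum(c.actions for c in convs) * CENTS_PER_ACTION

def outcome_bill(convs: list[Conversation]) -> int:
    """Charge only for conversations the agent resolved."""
    return sum(1 for c in convs if c.resolved) * CENTS_PER_RESOLUTION

batch = [
    Conversation(actions=5, resolved=True),
    Conversation(actions=40, resolved=False),   # agent looped, then gave up
    Conversation(actions=8, resolved=True),
    Conversation(actions=120, resolved=False),  # agent looped, then gave up
]

print("transit bill (cents):", transit_bill(batch))  # 1730
print("outcome bill (cents):", outcome_bill(batch))  # 200
```

In this made-up batch, the two failed conversations that looped through 160 actions account for 1,600 of the 1,730 cents billed under transit metering but cost nothing under outcome pricing, which is why the buyer finds the latter easier to evaluate.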

What sets this moment apart is not the addition of new value. In many cases, it is the addition of new checkpoints around existing value. The new revenue line is permission revenue. Permission revenue resembles a rent more than it resembles a service. Customers and regulators have long-established intuitions about rents.

Why This Is the Move Available Now

The vendors are not in a comfortable place. The instinct to install new tollbooths is best understood as a rational response to genuinely difficult conditions, not as a strategy chosen from a wide menu of attractive options.

The cost base is moving against them. Generative AI features have begun to reshape software unit economics in a way that nothing in the prior decade did. Inference costs, evaluation engineering, additional observability infrastructure, and the rising bill for the underlying model APIs, none of which existed at this scale eighteen months ago, are all consuming a meaningful share of revenue. The GitHub Copilot story is the public version of a problem nearly every vendor shipping AI features is facing privately. Margins that used to sit comfortably in the high seventies, in percentage terms, are drifting toward levels that pure-play software businesses have not traditionally accepted. Software vendors are not raising prices because they want to. They are raising prices because their own cost-push is now real, immediate, and visible in their quarterly numbers.

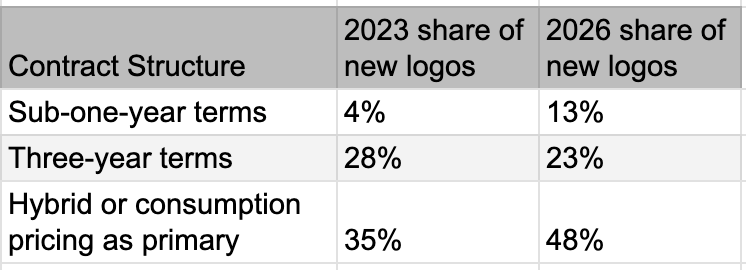

Demand-side economics are shifting at the same time. Per-seat pricing softens when one agent does the work of ten employees. Net new revenue slows when AI compresses headcount growth at the customer. Customers are also signing shorter contracts because they expect the landscape to reshape every six months.

Against that backdrop, the tollbooth is one of the few obvious moves on the table. Build a new revenue line, reasonably fast, that does not depend on seat growth. Bill the same customer for new categories of activity. Hope the booth holds long enough to bridge the company across the transition. From inside a software company facing tougher quarters and watchful investors, the strategy is reasonable. It is what one would expect any management team to try.

The catch is that the move works only if no one else makes it at the same time. The minute most vendors install tollbooths in parallel, the booths start competing with each other and with the customer's other options. And the agentic shift has set off the most unusual price wave the enterprise technology stack has seen. Cost-push from silicon. Cost-push from model providers. Cost-push from infrastructure. Cost-push from each layer of application software now adding its own metered access fees on top. The same enterprise CTO is suddenly being asked to absorb simultaneous price increases from every vendor in the chain. The tremor is real, and it is being felt across the customer base in a way that no single previous price hike ever was.

The First New Competition: Every Product Against Every Other Product

Historically, category boundaries provided commercial moats. SAP governed enterprise resources, ServiceNow managed workflows, and Salesforce owned customer relationships. Each had its own buyer, its own budget line, and its own competitive set.

Today, those boundaries have collapsed. Every major platform is simultaneously deploying an agentic orchestration layer that wants to be the layer through which enterprise data flows. The nomenclature varies. ServiceNow has Action Fabric. Salesforce has Agentforce. Microsoft has Copilot Studio. SAP has Joule. Snowflake has Cortex. Databricks has Mosaic. Five years ago, none of these companies competed with each other in any serious way. Today, they are all selling versions of the same idea, all chasing the same dollar inside the same enterprise IT budget.

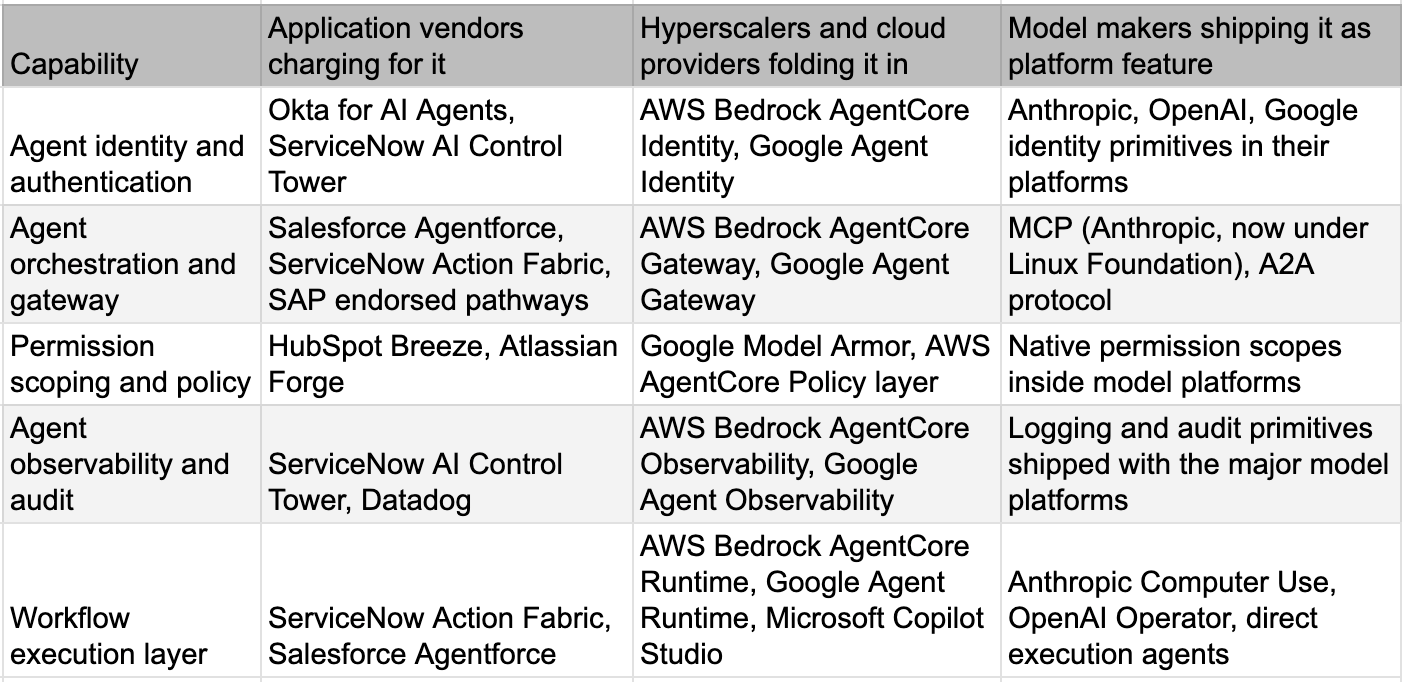

That part of the story was visible six months ago. What is newer and structurally more important is who else has now climbed into the same layer.

The cloud hyperscalers and the major model makers have been building agent identity, agent gateways, agent runtimes, and agent observability throughout 2026. On the surface, the moves look identical to what the application vendors are doing. Underneath, the motivation is the opposite. The hyperscalers and model makers are not desperate for the few cents per agent operation. They are racing to make themselves the default infrastructure layer for agent governance, partly to defend their cloud share and partly to capture the workloads that will sit on top.

Same capability. Three different motivations. The first column needs the revenue. The second column wants the workload. The third column wants the adoption. Only the first is compatible with the tollbooth strategy.

When AWS folds agent identity, gateway, memory, and observability into Bedrock AgentCore as billed-but-modest infrastructure layers, the application vendor selling the same capability at premium prices is in a different position than it was the day before. When Google ships Agent Identity, Agent Gateway, and Model Armor as core platform features inside the Gemini Enterprise Agent Platform, the same thing happens again. When Anthropic donates the Model Context Protocol to the Linux Foundation, and the largest model and cloud companies in the world co-found a standards body to govern it together, the application vendor's proprietary checkpoint loses some of what made it proprietary.

None of this is settled. New protocols will emerge. Competing standards will fight. The agent-governance space will reshuffle through 2026 and 2027. But the direction of travel is unambiguous. The players with the deepest pockets in this market are systematically reducing the barriers that the application vendors are trying to raise.

In other words, the application vendor charging for permission is competing against a free version of the same capability, shipped by a company that has every incentive to make it free and no incentive to make it expensive. That is among the most uncomfortable competitive positions in the modern technology stack.

It is also exactly the asymmetry that turns the tollbooth from a clever revenue line into a hard problem. AI as a technology wants data barriers to come down. Application vendors as commercial actors are trying to keep them up. The hyperscalers and model makers have no incentive to keep barriers up and every incentive to take them down. The customer is caught in the middle, but the customer increasingly has powerful allies above and beside it.

The Second New Competition: Products and Services Blur

The agent era is dissolving the boundary between software products and human services in a way that no previous wave of enterprise technology has achieved.

Historically, software vendors avoided services revenue. The market paid fifteen times revenue for products and one to two times for services. Pure SaaS companies pushed implementation work out to system integrators and consulting firms. The cleanest model was self-installing software with high gross margins, low marginal costs, and minimal consulting headcount. For two decades, this was the dominant template.

Agents have made the template harder to sustain. An agent is not a product in the traditional sense. It is a configured workflow that depends on deep enterprise context to function, and that context cannot easily be productized. It must be discovered, modeled, and implemented customer by customer. Vendors selling agent platforms now find themselves building services capacity faster than they ever planned. Forward-deployed engineers, solution architects, customer engineering teams, embedded consultants. The names vary. The function is the same.

At the same time, the global consulting industry is pivoting in the opposite direction. The largest firms have spent the past two years building proprietary AI tooling, packaged methodologies, and repeatable agent templates. They are selling these as products inside service engagements. The model makers are accelerating the blur from yet another angle. OpenAI's recent deployment-services arm, backed by major private equity, embeds its engineers directly inside client organizations to build bespoke agentic systems. Anthropic does enterprise deployment work alongside its model business. The largest model companies have decided they do not want to sit at the top of a stack of intermediaries. They want to be present at every layer, sometimes as a product, sometimes as a service, sometimes as a partner to incumbents, and sometimes as a direct competitor to them.

What this means commercially is that everyone is now competing on a different axis from the one on which their valuation was built. Pure SaaS companies are absorbing services revenue at lower margins than their multiple for pure software assumes. Service companies are competing against their largest software customers. Model makers are competing with both. The blur is not a temporary phase. It is the new structure, and the structure carries lower aggregate margins than any of its participants are currently valued on.

A vendor trying to defend its old multiple by layering new access fees on top of existing licenses is fighting two battles at once. The fee draws competitive attention. The blurring of products and services compresses margins regardless of how much it collects.

The Third New Competition: Customers Themselves

The most under-priced source of pressure on enterprise software may be the customer's own engineering team.

For thirty years, the cost of building enterprise software in-house was high enough to be prohibitive. The assumption that no large company could economically build its own CRM, its own ERP, its own service management platform, its own data warehouse, was the foundation of the entire SaaS business model. CIOs would tell their boards every five years that they could build it cheaper internally. They would be wrong every time. The vendor's moat was not the software. It was the cumulative cost and risk of building the software, plus the cost of maintaining it, plus the difficulty of attracting and retaining the engineering talent to do both.

That moat has not disappeared. But it is considerably narrower than it was three years ago.

The cost of building software has fallen sharply in the past eighteen months. Generative coding tools have moved from novelties to default infrastructure inside engineering organizations. The skill floor for building meaningful enterprise tooling has dropped by an order of magnitude. The capability to maintain and extend such tooling, which used to be the real long-tail cost, has become meaningfully cheaper because AI now handles much of the maintenance burden. None of this means every enterprise will build everything in-house. Most will not. But more will build, configure, or extend specific capabilities than at any prior point in the SaaS era.

The pressure shows up at the margin. A customer faced with a new permission tollbooth no longer asks only whether to pay or switch. The customer now asks whether to build a substitute. Many will conclude that a thin permission layer built on top of open protocols is a reasonable investment compared with perpetual metered access fees. Many will conclude that orchestration glue is exactly the kind of work AI-equipped internal engineers can now handle. Many will conclude that the value of optionality, of not committing to a vendor's tollbooth, is worth more than the marginal cost of building around it.

The build threat does not work the same way everywhere. Some workflows touch deep, vendor-specific data schemas that internal teams cannot easily replicate. AI may even strengthen vendor stickiness in those cases, because agents trained against proprietary schemas become harder to migrate, not easier. The customer that builds heavily against Salesforce or Workday or SAP data may find itself more tethered to those vendors, not less. The build pressure operates on the orchestration and permission layers more than on the systems of record beneath them. But that is still where most of the new tollbooths are being built.

This is happening at exactly the moment when customers are also signing shorter contracts. Combine the two and the bargaining position at every renewal shifts toward the buyer. The buyer can credibly raise the possibility of building. The buyer can credibly raise the possibility of switching. The buyer can credibly slow-walk a renewal. The vendor's main counter is to soften the price, which is the opposite of what the tollbooth strategy promised.

There is also a regulatory backdrop now in place that did not exist eighteen months ago. The European Data Act, in effect since September 2025, gives customers a legally enforceable right to access their own data and to share it with third parties on fair terms. Switching charges between data-processing providers are capped to direct costs through January 2027, after which they are banned entirely. The legal scaffolding to challenge data tollbooths is written and operational. Once one major customer raises a formal complaint, others tend to follow.

None of this means SaaS dies. Vendors will adapt. Customers will compromise. Contracts will reset. The right word for the trajectory is not collapse. It is pressure. Pressure at the margin. Renewals at lower terms. New logos at slower rates than the narrative implies. Pricing power that fades quarter by quarter rather than crashing. The product survives. The compounding becomes harder to sustain.

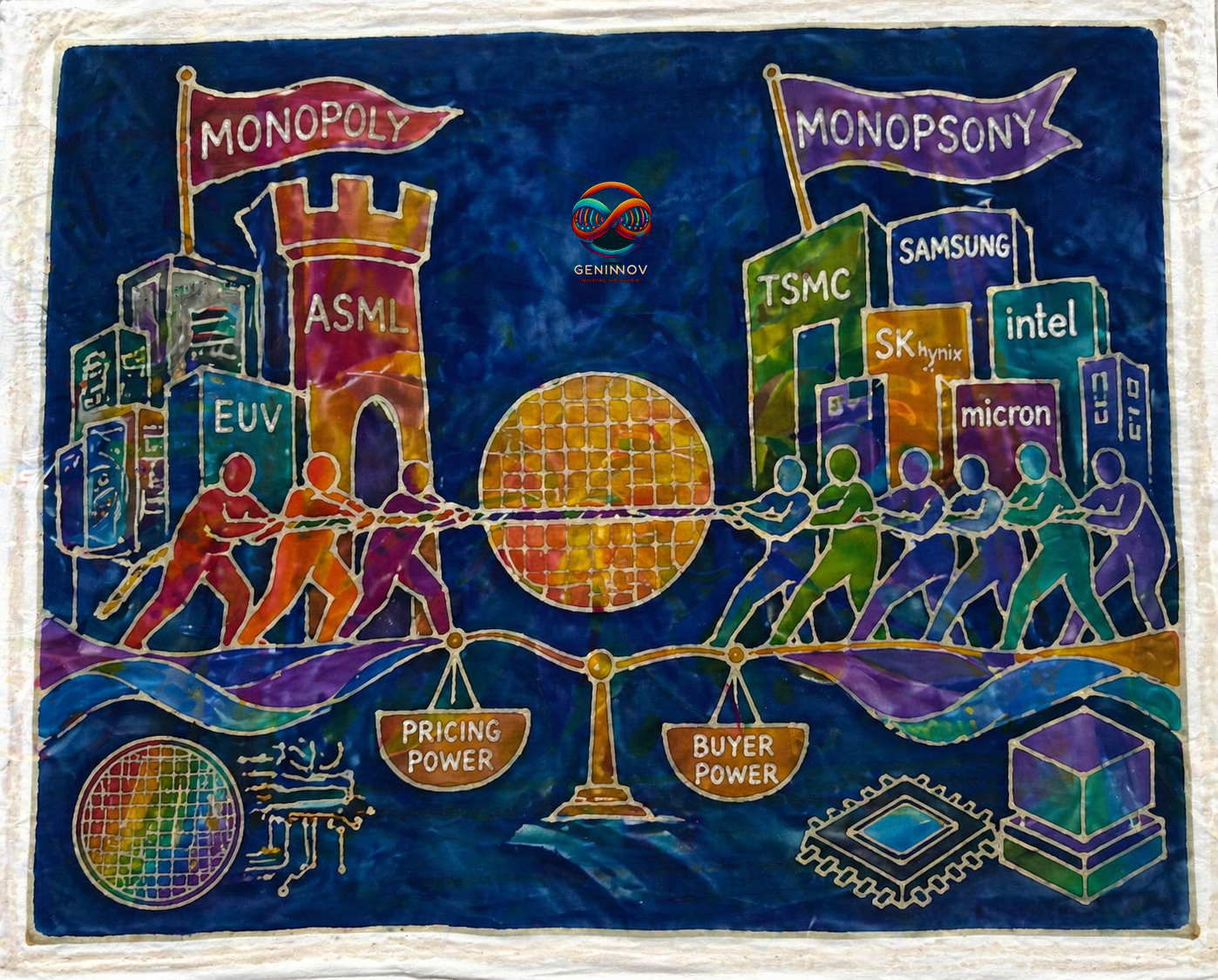

Why This Looks Nothing Like Silicon

Nobody likes paying higher prices. When an enterprise faces sharply rising prices from its hardware and infrastructure providers, from new vendors in the form of LLM makers, and from traditional partners in software and services, the need to consider alternatives grows. CTOs may be able to convince management to cut other expenditures to allocate more to technology, or even to accelerate workforce restructuring to free up funds for technology spend. But for most players, paying more to everyone asking for more is not a viable option.

This is where the internal dynamics of these industries matter. They will decide who can get away with higher asks. And this is where the economics of software in the agent era look nothing like the economics of silicon or of the model makers. We will not repeat the obvious about supply chokeholds in silicon. The pricing power of the most capable model makers is also easy to understand, notwithstanding a constantly shifting competitive landscape and the competition from open-source models. The model makers, at least, operate amid an exploding TAM.

Software is the opposite. The cost of producing software is collapsing. Substitutes proliferate. Internal teams can build. Adjacent vendors can pivot. Open standards arrive with the explicit purpose of neutralizing tollbooths. Every major enterprise software vendor now competes with every other one in the same agent layer. The hyperscalers are bundling the same capability into infrastructure. The model makers are commoditizing it from above. The customer's own engineering team is arming itself with generative tools and looking for more in-house work to ensure its own stability.

The pricing announcements of the past sixty days will produce a near-term revenue boost. Some will land. Some will hold for a year or two. A few may stick longer. None of them, however, looks likely to compound the way 2018-vintage SaaS economics compounded.

In a world where the silicon shock continues to push costs and prices up across the stack, software vendors look at first glance like obvious beneficiaries of the new strategies they are embarking on. The closer look suggests the opposite. Their tollbooths are real. So is the road. So, increasingly, is the customer's ability to take a different one.